Writing a pipeline to upgrade an existing VMware Tanzu Operations Manager

This how-to guide shows you how to create a pipeline for upgrading an existing VMware Tanzu Operations Manager VM. If you don't have a Tanzu Operations Manager VM, see Installing Tanzu Operations Manager.

Prerequisites

Over the course of this guide, you will use Platform Automation Toolkit to create a pipeline using Concourse.

You need:

- For upgrade only: A running Tanzu Operations Manager VM that you would like to upgrade

- Credentials for an IaaS that Tanzu Operations Manager is compatible with

- It doesn't matter what IaaS you use for Tanzu Operations Manager, as long as your Concourse can connect to it. Pipelines built with Platform Automation Toolkit can be platform-agnostic.

- A Concourse instance with access to a CredHub instance and to the Internet

- A GitHub account

- Read/write credentials and bucket name for an S3 bucket

- An account on the Broadcom Support portal

- A MacOS workstation with:

- a text editor of your choice

- a terminal emulator of your choice

- a browser that works with Concourse, like Firefox or Chrome

- git installed

- Docker installed

It helps to have a basic familiarity with the tools used in this guide: bash, git, YAML, and Concourse. If you don't, you will find some basics explained here, along with links to resources to learn more.

While this guide uses GitHub to provide a git remote, and an S3 bucket as a blobstore, Platform Automation Toolkit supports arbitrary git providers and S3-compatible blobstores.

Specific examples are described in some detail, but if you follow along with different providers some details may be different. Also see Setting up S3 for file storage.

Similarly, in this guide, MacOS is assumed, but Linux should work well, too. Keep in mind that there might be differences in the paths that you will need to figure out.

Creating a Concourse pipeline

Platform Automation Toolkit's tasks and image are meant to be used in a Concourse pipeline.

Using your bash command-line client, create a directory to keep your pipeline files in, and cd into it.

mkdir your-repo-name

cd !$

This repo name should relate to your situation and be specific enough to be navigable from your local workstation.

!$ is a bash shortcut. Pronounced "bang, dollar-sign," it means "use the last argument from the most recent command." In this case, that's the directory you just created.
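History expansion such as !$ is normally only active at an interactive prompt, so it does not carry over to scripts. As a minimal sketch, the same two steps can be written with bash's `$_` parameter, which expands to the last argument of the previous command. The temporary parent directory is an assumption made for this sketch so that it does not touch your real workspace; your-repo-name is the placeholder used above.

```shell
#!/usr/bin/env bash
set -euo pipefail

# Work under a throwaway parent directory (an assumption for this sketch).
workdir="$(mktemp -d)"

# Create the pipeline directory, then cd into it.
# "$_" expands to the last argument of the previous command,
# which is what !$ gives you at an interactive prompt.
mkdir -p "$workdir/your-repo-name"
cd "$_"

# Show the name of the directory we ended up in.
basename "$PWD"
```

At an interactive prompt, `cd !$` and `cd "$_"` behave the same for this case; the second form is simply safer to paste into a script.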

Gather variables to use in the pipeline (for upgrade only)

If you are upgrading, continue with the following. If not, skip to Creating a pipeline.

Before getting started with the pipeline, gather some variables in a file that you can use throughout your pipeline.

Open your text editor and create vars.yml. Here's what it should look like to start. You can add more variables as you go:

platform-automation-bucket: your-bucket-name

credhub-server: https://your-credhub.example.com

opsman-url: https://pcf.foundation.example.com

This example assumes that you are using DNS and host names. You can use IP addresses for all these resources instead, but you still need to provide the information as a URL, for example: https://120.121.123.124.
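Because these values must be URLs, a quick check of vars.yml before using it can catch a bare host name or IP address. The following is a minimal sketch and not part of Platform Automation Toolkit: it writes the example file to a temporary location (an assumption, so your real vars.yml is untouched) and uses grep to list any credhub-server or opsman-url value that does not start with https://. Requiring https specifically is also an assumption taken from the examples above.

```shell
#!/usr/bin/env bash
set -euo pipefail

# Write the example vars file to a scratch location (assumption for this sketch).
vars_file="$(mktemp -d)/vars.yml"
cat > "$vars_file" <<'EOF'
platform-automation-bucket: your-bucket-name
credhub-server: https://your-credhub.example.com
opsman-url: https://120.121.123.124
EOF

# Collect URL-style keys whose value does not begin with https://.
# "|| true" keeps the script going when grep finds nothing to report.
bad_lines="$(grep -E '^(credhub-server|opsman-url):' "$vars_file" \
  | grep -Ev '^[a-z-]+: https://' || true)"

if [ -z "$bad_lines" ]; then
  echo "all URL values look OK"
else
  echo "these values are not https URLs:"
  echo "$bad_lines"
fi
```

To check your own file, point vars_file at it instead of writing the heredoc.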

Creating a pipeline

Create a file called pipeline.yml.

The examples in this guide use pipeline.yml, but you might create multiple pipelines over time. If there's a more sensible name for the pipeline you're working on, feel free to use that instead.

Start the file as shown here. This is YAML for "the start of the document." It's optional, but traditional:

---

Now you have a valid YAML pipeline file.

Getting fly

First, try to set your new YAML file as a pipeline with fly, the Concourse command-line interface (CLI).

To check if you have fly installed:

fly -v

If it returns a version number, you're ready for the next steps. Skip ahead to Setting the pipeline.

If it says something like -bash: fly: command not found, you need to get fly.
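If you would rather not interpret the error message by eye, the check can be scripted. This is a minimal sketch using the shell builtin `command -v`, which prints the path of an executable found on PATH and exits non-zero otherwise. The variable names fly_path and fly_status are illustrative only.

```shell
#!/usr/bin/env bash
set -euo pipefail

# command -v succeeds only if the shell can resolve "fly" on the current PATH.
if fly_path="$(command -v fly)"; then
  fly_status="installed"
  echo "fly found at $fly_path"
else
  fly_status="missing"
  echo "fly is not on your PATH; download it from your Concourse instance"
fi
```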

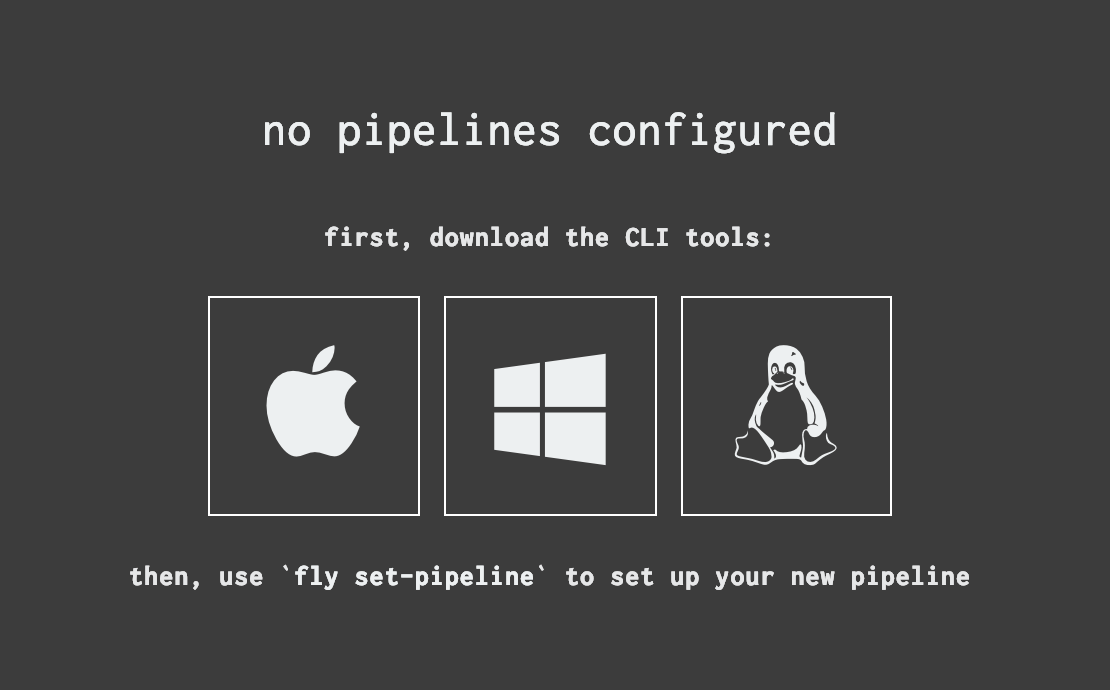

Navigate to the address for your Concourse instance in a web browser. At this point, you don't need to be signed in. If there are no public pipelines, you should see something like this:

If there are public pipelines, or if you're signed in and there are pipelines you can see, you'll see something similar in the lower-right hand corner.

Click the icon for your OS and save the file. Then move (mv) the resulting file to somewhere in your $PATH, and use chmod to make it executable.

About command-line examples: In some cases, you can copy-paste the examples directly into your terminal. Some of them won't work that way, or even if they did, would require you to edit them to replace our example values with your actual values. Best practice is to type all of the bash examples by hand, substituting values, if necessary, as you go. Don't forget that you can often hit the tab key to auto-complete the names of files that already exist; it makes all that typing just a little easier, and serves as a sort of command-line autocorrect.

Type the following into your terminal to get fly.

mv ~/Downloads/fly /usr/local/bin/fly

chmod +x !$

This means that you downloaded the fly binary and moved it into the bash PATH, which is where bash looks for things to execute when you type a command. Then you added permissions that allow it to be executed (+x). Now that the CLI is installed, you won't have to do this again, because fly has the ability to update itself (which is described in more detail in a later section).

Setting the pipeline

Now set your pipeline with fly, the Concourse CLI.

fly keeps a list of Concourses it knows how to talk to. To find out if the Concourse you need is already on the list, type:

fly targets

If you see the address of the Concourse you want to use in the list, note its name, and use it in the login command. The examples in this guide use the Concourse name control-plane.

fly -t control-plane login

If you don't see the Concourse you need, you can add it with the -c (--concourse-url) flag:

fly -t control-plane login -c https://your-concourse.example.com

You should see a login link you can click to complete login from your browser.

The -t flag sets the name when used with login and -c. In the future, you can leave out the -c argument.

If you ever want to know what a short flag stands for, you can run the command with -h (--help) at the end.

Time to set the pipeline. The examples here use the name "foundation" for this pipeline, but if your foundation has a name, use that instead.

fly -t control-plane set-pipeline -p foundation -c pipeline.yml

It should say no changes to apply, which is expected, since the pipeline.yml file is still empty.

If fly says something about a "version discrepancy," "significant" or otherwise, run fly sync and try again. fly sync automatically updates the CLI with the version that matches the Concourse you're targeting.

Your first job

Before running your pipeline the first time, turn your directory into a git repository.

This allows you to revert edits to your pipeline as needed. This is one of many reasons you should keep your pipeline under version control.

But first, git init

This section describes a step-by-step approach for getting set up with git.

For an example of the repository file structure for single and multiple foundation systems, see Why use Git and GitHub?

Run git init, then create an empty initial commit. git should come back with information about the commit you just created:

git init
git commit --allow-empty -m "Empty initial commit"

If this gives you a config error instead, you might need to configure git first. See First-Time Git Setup to complete the initial setup. When you have finished going through the steps in that guide, try again.

Now add your pipeline.yml.

git add pipeline.yml
git commit -m "Add pipeline"

If you are performing an upgrade, use this instead:

git add pipeline.yml vars.yml
git commit -m "Add pipeline and starter vars"

Check that everything is tidy:

git status

git should return nothing to commit, working tree clean.

When this is done, you can safely make changes.

git commits are the basic unit of code history. Making frequent, small, commits with good commit messages makes it much easier to figure out why things are the way they are, and to return to the way things were in simpler, better times. Writing short commit messages that capture the intent of the change really does make the pipeline history much more legible, both to future-you, and to current and future teammates and collaborators.
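The git steps in this section can be rehearsed in a scratch repository before you run them for real. The sketch below is an illustration under stated assumptions: the temporary directory, the placeholder identity (Example User, user@example.com), and the one-line pipeline.yml are all invented for the example.

```shell
#!/usr/bin/env bash
set -euo pipefail

# Use a scratch repository so the sketch does not touch your real one.
repo="$(mktemp -d)"
cd "$repo"

git init --quiet
# Placeholder identity, set only for this scratch repository.
git config user.name "Example User"
git config user.email "user@example.com"

# Same sequence as in this section: empty initial commit, then the pipeline file.
git commit --quiet --allow-empty -m "Empty initial commit"
printf -- '---\n' > pipeline.yml
git add pipeline.yml
git commit --quiet -m "Add pipeline"

# An empty --porcelain listing means the working tree is clean.
tree_state="$(git status --porcelain)"
commit_count="$(git rev-list --count HEAD)"
echo "commits: $commit_count, uncommitted changes: ${tree_state:-none}"
```

`git status --porcelain` is used here instead of plain `git status` because its output is stable and easy to test for emptiness in a script.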

The test task

Platform Automation Toolkit comes with a test task you can use to validate that it's been installed correctly.

Add the following to your pipeline.yml, starting on the line after the ---:

jobs:
- name: test
  plan:
    - task: test
      image: platform-automation-image
      file: platform-automation-tasks/tasks/test.yml

Try to set the pipeline now.

fly -t control-plane set-pipeline -p foundation -c pipeline.yml

Now you should be able to see your pipeline in the Concourse UI. It starts in the paused state, so click the play button to unpause it. Then click in to the gray box for the test job, and click the plus (+) button to schedule a build.

It should return an error immediately, with unknown artifact source: platform-automation-tasks. This is because there isn't a source for the task file yet.

This preparation has resulted in pipeline code that Concourse accepts.

Before starting the next step, make a commit:

git add pipeline.yml
git commit -m "Add (nonfunctional) test task"

Get the inputs you need by adding get steps to the plan before the task, as shown here:

jobs:
- name: test
  plan:
    - get: platform-automation-image
      resource: platform-automation
      params:
        globs: ["*image*.tgz"]
        unpack: true
    - get: platform-automation-tasks
      resource: platform-automation
      params:
        globs: ["*tasks*.zip"]
        unpack: true
    - task: test
      image: platform-automation-image
      file: platform-automation-tasks/tasks/test.yml

There is a smaller vSphere container image available. To use it instead of the general purpose image, you can use this glob to get the image:

- get: platform-automation-image
  resource: platform-automation
  params:
    globs: ["vsphere-platform-automation-image*.tar.gz"]
    unpack: true

Next, you might try to fly set this new pipeline. At this stage, you will see that it is not ready yet, and fly will return a message about invalid resources.

This is because you need to make the image and file available, so you need to set up some Resources.

Adding resources

Resources are Concourse's main approach to managing artifacts. You need an image and the tasks directory, so you need to tell Concourse how to get these things by declaring Resources for them.

In this case, you will download the image and the tasks directory from the Broadcom Support portal. Before you can declare the resources themselves, you must teach Concourse to talk to the Broadcom Support portal. (Many resource types are built in, but this one isn't.)

Add the following to your pipeline file, above the jobs entry.

resource_types:
- name: pivnet
  type: docker-image
  source:
    repository: pivotalcf/pivnet-resource
    tag: latest-final
resources:
- name: platform-automation
  type: pivnet
  source:
    product_slug: platform-automation
    api_token: ((pivnet-refresh-token))

The API token is a credential, which you pass in using the command line when setting the pipeline. You don't want to accidentally check it in.

Bash does not save commands that start with a space character in your history, provided the HISTCONTROL variable includes ignorespace or ignoreboth. This can be very useful for cases like this, where you want to pass a secret, but you don't want it saved. Commands in this guide that contain a secret start with a space, which can be easy to miss.
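Whether a leading space actually keeps a command out of history depends on bash's HISTCONTROL setting, so it is worth confirming before you type a secret. The sketch below checks the current value; the function name hides_space_prefixed is hypothetical and exists only for this example.

```shell
#!/usr/bin/env bash

# HISTCONTROL is a colon-separated list. The values ignorespace and ignoreboth
# tell bash to keep space-prefixed commands out of the history list.
hides_space_prefixed() {
  case ":${1:-}:" in
    *:ignorespace:*|*:ignoreboth:*) return 0 ;;
    *) return 1 ;;
  esac
}

if hides_space_prefixed "${HISTCONTROL:-}"; then
  echo "space-prefixed commands are not saved to history"
else
  echo "space-prefixed commands ARE saved; consider: export HISTCONTROL=ignoreboth"
fi
```

Run this in the same terminal session where you plan to type the commands, since HISTCONTROL is set per shell.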

Get a refresh token from your Broadcom Support profile (when logged in, click your user name, then Edit Profile, and click Request New Refresh Token). Then use that token in the following command:

# note the space before the command
 fly -t control-plane set-pipeline \
     -p foundation \
     -c pipeline.yml \
     -v pivnet-refresh-token=your-api-token

When you get your Broadcom Support token as described above, any previous Broadcom Support tokens you have stop working. If you're using your Broadcom Support refresh token anywhere, retrieve it from your existing secret storage rather than getting a new one, or you'll end up needing to update it everywhere it's used.

Go back to the Concourse UI and trigger another build. This time, it should pass.

Now it's time to commit.

git add pipeline.yml
git commit -m "Add resources needed for test task"

It's better not to pass the Broadcom Support token every time you need to set the pipeline. Fortunately, Concourse can integrate with secret storage services, like CredHub. In this step, put the API token in CredHub so Concourse can get it.

Backslashes in bash examples: The following example has been broken across multiple lines by using backslash characters (\) to escape the newlines. The backslash is used here to keep the examples readable. When you're typing these out, you can skip the backslashes and put it all on one line.

First, log in. Again, note the space at the start.

# note the starting space
 credhub login --server example.com \
         --client-name your-client-id \
         --client-secret your-client-secret

Depending on your credential type, you may need to pass client-id and client-secret, as we do above, or username and password. We use the client approach because that's the credential type that automation should usually be working with. Nominally, a username represents a person, and a client represents a system; this isn't always exactly how things are in practice. Use whichever type of credential you have in your case. Note that if you exclude either set of flags, CredHub will interactively prompt for username and password, and hide the characters of your password when you type them. This method of entry can be better in some situations.

Next, set the credential name to the path where Concourse will look for it:

# note the starting space
 credhub set \
         --name /concourse/your-team-name/pivnet-refresh-token \
         --type value \
         --value your-credhub-refresh-token

Now, set the pipeline again, without passing a secret this time.

fly -t control-plane set-pipeline \
    -p foundation \
    -c pipeline.yml

This should succeed, and the diff Concourse shows you should replace the literal credential with ((pivnet-refresh-token)).

Go to the UI again and re-run the test job; this should also succeed.
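When Concourse resolves a variable such as ((pivnet-refresh-token)) through CredHub, it checks a pipeline-specific path first and then the team path. The sketch below prints both candidate paths in that order. It assumes the default /concourse path prefix, and the function name credhub_lookup_paths is hypothetical, made up for this illustration.

```shell
#!/usr/bin/env bash
set -euo pipefail

# Print the CredHub paths Concourse checks for a ((variable)), in lookup order.
# Assumes the default /concourse path prefix.
credhub_lookup_paths() {
  local team="$1" pipeline="$2" var="$3"
  # Pipeline-scoped path, checked first.
  printf '/concourse/%s/%s/%s\n' "$team" "$pipeline" "$var"
  # Team-scoped path, checked second.
  printf '/concourse/%s/%s\n' "$team" "$var"
}

lookup="$(credhub_lookup_paths your-team-name foundation pivnet-refresh-token)"
echo "$lookup"
```

The credential set above lives at the second, team-level path, which is why any pipeline belonging to your-team-name can use it.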

Exporting the installation

Before upgrading Tanzu Operations Manager, you must first download and persist an export of the current installation.

VMware strongly recommends automatically exporting the Tanzu Operations Manager installation and persisting it to your blobstore on a regular basis. This ensures that if you need to upgrade (or restore) your Tanzu Operations Manager for any reason, you'll have the latest installation info available. A time trigger is added later in this tutorial to help with this.

First, switch out the test job for one that exports the existing Tanzu Operations Manager installation. Do this by changing:

- the name of the job

- the name of the task

- the file of the task

The task in the job should be export-installation. It has an additional required input: the env file that export-installation uses to talk to Tanzu Operations Manager.

Before writing that file and making it available as a resource, get it as if it's there.

It also has an additional output (the exported installation). The job persists it with a put step, to a resource that is declared later in this section.

Finally, while it's fine for test to run in parallel, this job shouldn't, so you also need to add serial: true to the job.

jobs:
- name: export-installation
  serial: true
  plan:
    - get: platform-automation-image
      resource: platform-automation
      params:
        globs: ["*image*.tgz"]
        unpack: true
    - get: platform-automation-tasks
      resource: platform-automation
      params:
        globs: ["*tasks*.zip"]
        unpack: true
    - get: env
    - task: export-installation
      image: platform-automation-image
      file: platform-automation-tasks/tasks/export-installation.yml
    - put: installation
      params:
        file: installation/installation-*.zip

If you try to fly this up to Concourse, it will again throw errors about resources that don't exist, so the next step is to make them. The first new resource you need is the config file (env.yml).

Push your git repo to a remote on GitHub to make this (and later, other) configuration available to the pipelines. GitHub has good instructions you can follow to create a new repository on GitHub. You can skip over the part about using git init to set up your repo, since you did that earlier.

Now set up your remote and use git push to make it available. You will use this repository to hold the configuration specific to your single foundation. These instructions use the "Single repository for each Foundation" pattern to structure the configurations.

Add the repository URL to CredHub so that you can reference it later when you declare the corresponding resource.

pipeline-repo: git@github.com:username/your-repo-name

Write an env.yml for your Tanzu Operations Manager. env.yml holds authentication and target information for a particular Tanzu Operations Manager.

An example env.yml for username/password authentication is shown below with the required properties. See Configuring Env for the entire list of properties that can be used with env.yml, as well as an example of an env.yml that can be used with UAA (SAML, LDAP, etc.) authentication.

The property decryption-passphrase is required for import-installation, and therefore required for upgrade-opsman.

If your foundation uses authentication other than basic auth, see Inputs and Outputs for more detail on UAA-based authentication.

target: ((opsman-url))
username: ((opsman-username))
password: ((opsman-password))
decryption-passphrase: ((opsman-decryption-passphrase))

Add and commit the new env.yml file:

git add env.yml
git commit -m "Add environment file for foundation"
git push

Now that the env file is in your git remote, you can add a resource to tell Concourse how to get it as env.

Since this is (probably) a private repo, you must create a deploy key Concourse can use to access it. Follow the GitHub instructions for creating a deploy key.

Place the private key in CredHub so you can use it in your pipeline:

# note the starting space
 credhub set \
         --name /concourse/your-team-name/plat-auto-pipes-deploy-key \
         --type ssh \
         --private the/filepath/of/the/key-id_rsa \
         --public the/filepath/of/the/key-id_rsa.pub

Add this to the resources section of your pipeline file:

- name: env
  type: git
  source:
    uri: ((pipeline-repo))
    private_key: ((plat-auto-pipes-deploy-key.private_key))
    branch: main

Put the credentials in CredHub:

# note the starting space throughout
 credhub set \
   -n /concourse/your-team-name/foundation/opsman-username \
   -t value -v your-opsman-username
 credhub set \
   -n /concourse/your-team-name/foundation/opsman-password \
   -t value -v your-opsman-password
 credhub set \
   -n /concourse/your-team-name/foundation/opsman-decryption-passphrase \
   -t value -v your-opsman-decryption-passphrase

Notice the additional element in the cred paths: the foundation name.

If you look at Concourse lookup rules, you'll see that it searches the pipeline-specific path before the team path. Since our pipeline is named for the foundation it's used to manage, we can use this to scope access to our foundation-specific information to just this pipeline.

By contrast, the Tanzu Network token may be valuable across several pipelines (and associated foundations), so we scoped that to our team.

To perform interpolation in one of your input files, you need the credhub-interpolate task. Earlier, you relied on Concourse's native integration with CredHub for interpolation. That worked because you needed to use the variable in the pipeline itself, not in one of your inputs.

You can add it to your job after retrieving your env input, but before the export-installation task:

jobs:
- name: export-installation
  serial: true
  plan:
    - get: platform-automation-image
      resource: platform-automation
      params:
        globs: ["*image*.tgz"]
        unpack: true
    - get: platform-automation-tasks
      resource: platform-automation
      params:
        globs: ["*tasks*.zip"]
        unpack: true
    - get: env
    - task: credhub-interpolate
      image: platform-automation-image
      file: platform-automation-tasks/tasks/credhub-interpolate.yml
      params:
        CREDHUB_CLIENT: ((credhub-client))
        CREDHUB_SECRET: ((credhub-secret))
        CREDHUB_SERVER: https://your-credhub.example.com
        PREFIX: /concourse/your-team-name/foundation
      input_mapping:
        files: env
      output_mapping:
        interpolated-files: interpolated-env
    - task: export-installation
      image: platform-automation-image
      file: platform-automation-tasks/tasks/export-installation.yml
      input_mapping:
        env: interpolated-env
    - put: installation
      params:
        file: installation/installation-*.zip

The credhub-interpolate task for this job maps the output from the task (interpolated-files) to interpolated-env. This can be used by the next task in the job to more explicitly define the inputs/outputs of each task. It is also okay to leave the output as interpolated-files if it is appropriately referenced in the next task.

Notice the input mappings of the credhub-interpolate and export-installation tasks. This allows you to use the output of one task as an input of another.

An alternative to input_mapping is discussed in Configuration Management Strategies.

Put your credhub-client and credhub-secret into CredHub, so Concourse's native integration can retrieve them and pass them as configuration to the credhub-interpolate task.

# note the starting space throughout
 credhub set \
   -n /concourse/your-team-name/credhub-client \
   -t value -v your-credhub-client
 credhub set \
   -n /concourse/your-team-name/credhub-secret \
   -t value -v your-credhub-secret

Now, the credhub-interpolate task will interpolate your env input, and pass it to export-installation as env.

The other new resource you need is a blobstore, so you can persist the exported installation.

Add an S3 resource to the resources section:

- name: installation
  type: s3
  source:
    access_key_id: ((s3-access-key-id))
    secret_access_key: ((s3-secret-key))
    bucket: ((platform-automation-bucket))
    regexp: installation-(.*).zip

Save the credentials in CredHub:

# note the starting space throughout
 credhub set \
   -n /concourse/your-team-name/s3-access-key-id \
   -t value -v your-bucket-s3-access-key-id
 credhub set \
   -n /concourse/your-team-name/s3-secret-key \
   -t value -v your-s3-secret-key

This time (and in the future), when you set the pipeline with fly, you need to load vars from vars.yml.

# note the space before the command
 fly -t control-plane set-pipeline \
     -p foundation \
     -c pipeline.yml \
     -l vars.yml

Manually trigger a build. This time, it should pass.

You'll be using this, the ultimate form of the fly command to set your pipeline, for the rest of the tutorial.

You can save yourself some typing by using your bash history (if you did not prepend your command with a space). You can cycle through previous commands with the up and down arrows. Alternatively, Ctrl-r will search your bash history. Press Ctrl-r, type fly, and you will see the last fly command you ran. Run it with enter, or instead of running it, use Ctrl-r again to see the matching command before that.

This is a good commit point:

git add pipeline.yml vars.yml
git commit -m "Export foundation installation in CI"
git push

Performing the upgrade

Now that you have an exported installation, it's time to create another Concourse job to do the upgrade itself. The export and the upgrade need to be in separate jobs so they can be triggered (and re-run) independently.

This new job uses the upgrade-opsman task.

Write a new job that has get steps for your platform-automation resources and all the inputs you already know how to get:

- name: upgrade-opsman
  serial: true
  plan:
    - get: platform-automation-image
      resource: platform-automation
      params:
        globs: ["*image*.tgz"]
        unpack: true
    - get: platform-automation-tasks
      resource: platform-automation
      params:
        globs: ["*tasks*.zip"]
        unpack: true
    - get: env
    - get: installation

Do a commit here. The job doesn't do anything useful yet, but it's a good place to start.

git add pipeline.yml
git commit -m "Set up initial gets for upgrade job"
git push

We recommend frequent, small commits that can be fly set and, ideally, go green.

This one doesn't actually do anything though, right? Fair, but: setting and running the job gives you feedback on your syntax and variable usage. It can catch typos, resources you forgot to add or misnamed, and so on. Committing when you get to a working point helps keep the diffs small, and the history tractable. Also, down the line, if you've got more than one pair working on a foundation, the small commits help you keep off one another's toes.

This workflow is not demonstrated here, but it can even be useful to make a commit, use fly to see if it works, and then push it if and only if it works. If it doesn't, you can use git commit --amend once you've figured out why and fixed it. This workflow makes it easy to keep what is set on Concourse and what is pushed to your source control remote in sync.

You need the three required inputs for upgrade-opsman:

- state

- config

- image

There are also optional inputs (vars used with the config); you can add those when you set up config.

Start with the state file. Record the iaas you're using and the ID of the currently deployed Tanzu Operations Manager VM. Different IaaS uniquely identify VMs differently; here are examples for what this file should look like, depending on your IaaS:

AWS

iaas: aws
# Instance ID of the AWS VM
vm_id: i-12345678987654321

Azure

iaas: azure
# Computer Name of the Azure VM
vm_id: vm_name

GCP

iaas: gcp
# Name of the VM in GCP
vm_id: vm_name

OpenStack

iaas: openstack
# Instance ID from the OpenStack Overview
vm_id: 12345678-9876-5432-1abc-defghijklmno

vSphere

iaas: vsphere
# Path to the VM in vCenter
vm_id: /datacenter/vm/folder/vm_name

Choose the example that matches your IaaS, write it in your repo as state.yml, commit it, and push it:

git add state.yml
git commit -m "Add state file for foundation Ops Manager"
git push

You can map the env resource to the upgrade-opsman state input after you add the task.

But first, there are two more inputs to arrange for.
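Before committing state.yml, a quick sanity check can confirm that it names one of the IaaS values shown in the examples and has a non-empty vm_id. The following is only a sketch: it works on a scratch copy containing the placeholder AWS values, and the sed expressions only understand simple "key: value" lines, not YAML in general.

```shell
#!/usr/bin/env bash
set -euo pipefail

# Scratch copy of a state file, using the placeholder AWS example values.
state_file="$(mktemp -d)/state.yml"
cat > "$state_file" <<'EOF'
iaas: aws
# Instance ID of the AWS VM
vm_id: i-12345678987654321
EOF

# Pull out the two fields; comment lines are skipped because they do not match.
iaas="$(sed -n 's/^iaas:[[:space:]]*//p' "$state_file")"
vm_id="$(sed -n 's/^vm_id:[[:space:]]*//p' "$state_file")"

# Accept only the IaaS names listed in the examples.
case "$iaas" in
  aws|azure|gcp|openstack|vsphere) state_ok=yes ;;
  *) state_ok=no ;;
esac
[ -n "$vm_id" ] || state_ok=no

echo "iaas=$iaas vm_id=$vm_id valid=$state_ok"
```

To check your real file, set state_file to its path and drop the heredoc.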

Option 1: Write a Tanzu Operations Manager VM Configuration file to opsman.yml. The properties available vary by IaaS, but you can often inspect your existing Tanzu Operations Manager in your IaaS console (or, if your Tanzu Operations Manager was created with Terraform, look at your Terraform outputs) to find the necessary values.

AWS

---
opsman-configuration:
  aws:
    region: us-west-2
    vpc_subnet_id: subnet-0292bc845215c2cbf
    security_group_ids: [ sg-0354f804ba7c4bc41 ]
    key_pair_name: ops-manager-key  # used to SSH to VM
    iam_instance_profile_name: env_ops_manager

    # At least one IP address (public or private) needs to be assigned to the
    # VM. It is also permissible to assign both.
    public_ip: 1.2.3.4      # Reserved Elastic IP
    private_ip: 10.0.0.2

    # Optional
    # vm_name: ops-manager-vm    # default - ops-manager-vm
    # boot_disk_size: 100        # default - 200 (GB)
    # instance_type: m5.large    # default - m5.large
    #                            # NOTE - not all regions support m5.large
    # assume_role: "arn:aws:iam::..." # necessary if a role is needed to authorize
    #                                 # the OpsMan VM instance profile
    # tags: {key: value}              # key-value pair of tags assigned to the
    #                                 # Ops Manager VM
    # Omit if using instance profiles
    # And instance profile OR access_key/secret_access_key is required
    # access_key_id: ((access-key-id))
    # secret_access_key: ((secret-access-key))

    # security_group_id: sg-123  # DEPRECATED - use security_group_ids
    # use_instance_profile: true # DEPRECATED - will use instance profile for
    #                            # execution VM if access_key_id and
    #                            # secret_access_key are not set

  # Optional Ops Manager UI Settings for upgrade-opsman
  # ssl-certificate: ...
  # pivotal-network-settings: ...
  # banner-settings: ...
  # syslog-settings: ...
  # rbac-settings: ...

Azure

---
opsman-configuration:
  azure:
    tenant_id: 3e52862f-a01e-4b97-98d5-f31a409df682
    subscription_id: 90f35f10-ea9e-4e80-aac4-d6778b995532
    client_id: 5782deb6-9195-4827-83ae-a13fda90aa0d
    client_secret: ((opsman-client-secret))
    location: westus
    resource_group: res-group
    storage_account: opsman                       # account name of container
    ssh_public_key: ssh-rsa AAAAB3NzaC1yc2EAZ...  # ssh key to access VM

    # Note that there are several environment-specific details in this path
    # This path can reach out to other resource groups if necessary
    subnet_id: /subscriptions//resourceGroups/ /providers/Microsoft.Network/virtualNetworks/ /subnets/

    # At least one IP address (public or private) needs to be assigned
    # to the VM. It is also permissible to assign both.
    private_ip: 10.0.0.3
    public_ip: 1.2.3.4

    # Optional
    # cloud_name: AzureCloud          # default - AzureCloud
    # storage_key: ((storage-key))    # only required if your client does not
    #                                 # have the needed storage permissions
    # container: opsmanagerimage      # storage account container name
    #                                 # default - opsmanagerimage
    # network_security_group: ops-manager-security-group
    # vm_name: ops-manager-vm         # default - ops-manager-vm
    # boot_disk_size: 200             # default - 200 (GB)
    # use_managed_disk: true          # this flag is only respected by the
    #                                 # create-vm and upgrade-opsman commands.
    #                                 # set to false if you want to create
    #                                 # the new opsman VM with an unmanaged
    #                                 # disk (not recommended). default - true
    # storage_sku: Premium_LRS        # this sets the SKU of the storage account
    #                                 # for the disk
    #                                 # Allowed values: Standard_LRS, Premium_LRS,
    #                                 # StandardSSD_LRS, UltraSSD_LRS
    # vm_size: Standard_DS1_v2        # the size of the Ops Manager VM
    #                                 # default - Standard_DS2_v2
    #                                 # Allowed values: https://docs.microsoft.com/en-us/azure/virtual-machines/linux/sizes-general
    # tags: Project=ECommerce         # Space-separated tags: key[=value] [key[=value] ...]. Use '' to
    #                                 # clear existing tags.
    # vpc_subnet: /subscriptions/...  # DEPRECATED - use subnet_id
    # use_unmanaged_disk: false       # DEPRECATED - use use_managed_disk

  # Optional Ops Manager UI Settings for upgrade-opsman
  # ssl-certificate: ...
  # pivotal-network-settings: ...
  # banner-settings: ...
  # syslog-settings: ...
  # rbac-settings: ...

GCP

---
opsman-configuration:
  gcp:
    # Either gcp_service_account_name or gcp_service_account json is required
    # You must remove whichever you don't use
    gcp_service_account_name: [email protected]
    gcp_service_account: ((gcp-service-account-key-json))

    project: project-id
    region: us-central1
    zone: us-central1-b
    vpc_subnet: infrastructure-subnet

    # At least one IP address (public or private) needs to be assigned to the
    # VM. It is also permissible to assign both.
    public_ip: 1.2.3.4
    private_ip: 10.0.0.2

    ssh_public_key: ssh-rsa some-public-key... # RECOMMENDED, but not required
    tags: ops-manager                          # RECOMMENDED, but not required

    # Optional
    # vm_name: ops-manager-vm  # default - ops-manager-vm
    # custom_cpu: 2            # default - 2
    # custom_memory: 8         # default - 8
    # boot_disk_size: 100      # default - 100
    # scopes: ["my-scope"]
    # hostname: custom.hostname # info: https://cloud.google.com/compute/docs/instances/custom-hostname-vm

  # Optional Ops Manager UI Settings for upgrade-opsman
  # ssl-certificate: ...
  # pivotal-network-settings: ...
  # banner-settings: ...
  # syslog-settings: ...
  # rbac-settings: ...

OpenStack

---
opsman-configuration:
  openstack:
    project_name: project
    auth_url: http://os.example.com:5000/v2.0
    username: ((opsman-openstack-username))
    password: ((opsman-openstack-password))
    net_id: 26a13112-b6c2-11e8-96f8-529269fb1459
    security_group_name: opsman-sec-group
    key_pair_name: opsman-keypair

    # At least one IP address (public or private) needs to be assigned to the VM.
    public_ip: 1.2.3.4 # must be an already allocated floating IP
    private_ip: 10.0.0.3

    # Optional
    # availability_zone: zone-01
    # project_domain_name: default
    # user_domain_name: default
    # vm_name: ops-manager-vm       # default - ops-manager-vm
    # flavor: m1.xlarge             # default - m1.xlarge
    # identity_api_version: 2       # default - 3
    # insecure: true                # default - false

  # Optional Ops Manager UI Settings for upgrade-opsman
  # ssl-certificate: ...
  # pivotal-network-settings: ...
  # banner-settings: ...
  # syslog-settings: ...
  # rbac-settings: ...

vSphere

---
opsman-configuration:
  vsphere:
    vcenter:
      ca_cert: cert                 # REQUIRED if insecure = 0 (secure)
      datacenter: example-dc
      datastore: example-ds-1
      folder: /example-dc/vm/Folder # RECOMMENDED, but not required
      url: vcenter.example.com
      username: ((vcenter-username))
      password: ((vcenter-password))
      resource_pool: /example-dc/host/example-cluster/Resources/example-pool
      # resource_pool can use a cluster - /example-dc/host/example-cluster

      # Optional
      # host: host                  # DEPRECATED - Platform Automation cannot guarantee
      #                             # the location of the VM, given the nature of vSphere
      # insecure: 0                 # default - 0 (secure) | 1 (insecure)

    disk_type: thin                 # thin|thick
    dns: 8.8.8.8
    gateway: 192.168.10.1
    hostname: ops-manager.example.com
    netmask: 255.255.255.192
    network: example-virtual-network
    ntp: ntp.ubuntu.com
    private_ip: 10.0.0.10
    ssh_public_key: ssh-rsa ......  # REQUIRED Ops Manager >= 2.6

    # Optional
    # cpu: 1                         # default - 1
    # memory: 8                      # default - 8 (GB)
    # ssh_password: ((ssh-password)) # REQUIRED if ssh_public_key not defined
    #                                # (Ops Manager < 2.6 ONLY)
    # vm_name: ops-manager-vm        # default - ops-manager-vm
    # disk_size: 200                 # default - 160 (GB), only larger values allowed

  # Optional Ops Manager UI Settings for upgrade-opsman
  # ssl-certificate: ...
  # pivotal-network-settings: ...
  # banner-settings: ...
  # syslog-settings: ...
  # rbac-settings: ...

Option 2: Alternatively, you can auto-generate your opsman.yml using a p-automator command to output an opsman.yml file in the directory it is called from.

AWS

docker run -it --rm -v $PWD:/workspace -w /workspace platform-automation-image \
  p-automator export-opsman-config \
  --state-file generated-state/state.yml \
  --config-file opsman.yml \
  --aws-region "$AWS_REGION" \
  --aws-secret-access-key "$AWS_SECRET_ACCESS_KEY" \
  --aws-access-key-id "$AWS_ACCESS_KEY_ID"

Azure

docker run -it --rm -v $PWD:/workspace -w /workspace platform-automation-image \
  p-automator export-opsman-config \
  --state-file generated-state/state.yml \
  --config-file opsman.yml \
  --azure-subscription-id "$AZURE_SUBSCRIPTION_ID" \
  --azure-tenant-id "$AZURE_TENANT_ID" \
  --azure-client-id "$AZURE_CLIENT_ID" \
  --azure-client-secret "$AZURE_CLIENT_SECRET" \
  --azure-resource-group "$AZURE_RESOURCE_GROUP"

GCP

docker run -it --rm -v $PWD:/workspace -w /workspace platform-automation-image \
  p-automator export-opsman-config \
  --state-file generated-state/state.yml \
  --config-file opsman.yml \
  --gcp-zone "$GCP_ZONE" \
  --gcp-service-account-json <(echo "$GCP_SERVICE_ACCOUNT_JSON") \
  --gcp-project-id "$GCP_PROJECT_ID"

vSphere

docker run -it --rm -v $PWD:/workspace -w /workspace platform-automation-image \
  p-automator export-opsman-config \
  --state-file generated-state/state.yml \
  --config-file opsman.yml \
  --vsphere-url "$VCENTER_URL" \
  --vsphere-username "$VCENTER_USERNAME" \
  --vsphere-password "$VCENTER_PASSWORD"

Once you have your config file, commit and push it:

git add opsman.yml
git commit -m "Add opsman config"
git push

Get the image for the new Tanzu Operations Manager version using the download-product task. It requires a config file to specify which Tanzu Operations Manager to get, and to provide Tanzu Network credentials. Name this file download-opsman.yml:

---
pivnet-api-token: ((pivnet-refresh-token)) # interpolated from CredHub
pivnet-file-glob: "ops-manager*.ova"
pivnet-product-slug: ops-manager
product-version-regex: ^2\.5\.0.*$

git add download-opsman.yml
git commit -m "Add download opsman config"
git push

Now put it all together using the following:

- name: upgrade-opsman
  serial: true
  plan:
    - get: platform-automation-image
      resource: platform-automation
      params:
        globs: ["*image*.tgz"]
        unpack: true
    - get: platform-automation-tasks
      resource: platform-automation
      params:
        globs: ["*tasks*.zip"]
        unpack: true
    - get: env
    - get: installation
    - task: credhub-interpolate
      image: platform-automation-image
      file: platform-automation-tasks/tasks/credhub-interpolate.yml
      params:
        CREDHUB_CLIENT: ((credhub-client))
        CREDHUB_SECRET: ((credhub-secret))
        CREDHUB_SERVER: ((credhub-server))
        PREFIX: /concourse/your-team-name/foundation
      input_mapping:
        files: env
      output_mapping:
        interpolated-files: interpolated-configs
    - task: download-opsman-image
      image: platform-automation-image
      file: platform-automation-tasks/tasks/download-product.yml
      params:
        CONFIG_FILE: download-opsman.yml
      input_mapping:
        config: interpolated-configs
    - task: upgrade-opsman
      image: platform-automation-image
      file: platform-automation-tasks/tasks/upgrade-opsman.yml
      input_mapping:
        config: interpolated-configs
        image: downloaded-product
        secrets: interpolated-configs
        state: env

This example does not explicitly set the default parameters for upgrade-opsman. Because opsman.yml is the default value of OPSMAN_CONFIG_FILE, env.yml is the default value of ENV_FILE, and state.yml is the default value of STATE_FILE, it is redundant to set these params in the pipeline. See the task definitions for the full range of available parameters and their defaults.

Set the pipeline.
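For reference, the defaults described above could be written out explicitly on the upgrade-opsman task. The following fragment is only a sketch of where such params would go; the values simply restate the defaults named in this guide, so adding them should not change the job's behavior:

```yaml
- task: upgrade-opsman
  image: platform-automation-image
  file: platform-automation-tasks/tasks/upgrade-opsman.yml
  input_mapping:
    config: interpolated-configs
    image: downloaded-product
    secrets: interpolated-configs
    state: env
  params:
    # These restate the defaults named in this guide; set them only
    # if your files use different names or paths.
    OPSMAN_CONFIG_FILE: opsman.yml
    ENV_FILE: env.yml
    STATE_FILE: state.yml
```

If your config, env, or state file lives under a different name or in a subdirectory of its input, this is the place to override the corresponding param.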

Before running the job, ensure that state.yml is always persisted regardless of whether the upgrade-opsman job failed or passed. Add the following section to the job:

- name: upgrade-opsman
  serial: true
  plan:
    - get: platform-automation-image
      resource: platform-automation
      params:
        globs: ["*image*.tgz"]
        unpack: true
    - get: platform-automation-tasks
      resource: platform-automation
      params:
        globs: ["*tasks*.zip"]
        unpack: true
    - get: env
    - get: installation
    - task: credhub-interpolate
      image: platform-automation-image
      file: platform-automation-tasks/tasks/credhub-interpolate.yml
      params:
        CREDHUB_CLIENT: ((credhub-client))
        CREDHUB_SECRET: ((credhub-secret))
        CREDHUB_SERVER: ((credhub-server))
        PREFIX: /concourse/your-team-name/foundation
      input_mapping:
        files: env
      output_mapping:
        interpolated-files: interpolated-configs
    - task: download-opsman-image
      image: platform-automation-image
      file: platform-automation-tasks/tasks/download-product.yml
      params:
        CONFIG_FILE: download-opsman.yml
      input_mapping:
        config: interpolated-configs
    - task: upgrade-opsman
      image: platform-automation-image
      file: platform-automation-tasks/tasks/upgrade-opsman.yml
      input_mapping:
        config: interpolated-configs
        image: downloaded-product
        secrets: interpolated-configs
        state: env
      ensure:
        do:
          - task: make-commit
            image: platform-automation-image
            file: platform-automation-tasks/tasks/make-git-commit.yml
            input_mapping:
              repository: env
              file-source: generated-state
            output_mapping:
              repository-commit: env-commit
            params:
              FILE_SOURCE_PATH: state.yml
              FILE_DESTINATION_PATH: state.yml
              GIT_AUTHOR_EMAIL: "[email protected]"
              GIT_AUTHOR_NAME: "CI User"
              COMMIT_MESSAGE: 'Update state file'
          - put: env
            params:
              repository: env-commit
              merge: true

Set the pipeline one final time, run the job, and see it pass.

git add pipeline.yml
git commit -m "Upgrade Ops Manager in CI"
git push
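Because the make-commit step pushes state.yml back to the env repository from CI, your local checkout will fall behind after the job runs. As a rough sketch, the helper below (last_commit_for is a hypothetical name, not part of Platform Automation Toolkit) prints the author and subject of the latest commit that touched a file, which is one way to confirm that the state file was persisted. It assumes your local clone tracks the same branch as the env resource.

```shell
# Hypothetical helper: print "<author>: <subject>" for the most recent
# commit that touched the given path in the current git repository.
last_commit_for() {
  git log -1 --format='%an: %s' -- "$1"
}
```

After the job finishes, running git pull followed by last_commit_for state.yml in your local clone should show a commit by the author and message configured in the make-commit params.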

Your upgrade pipeline is now complete.