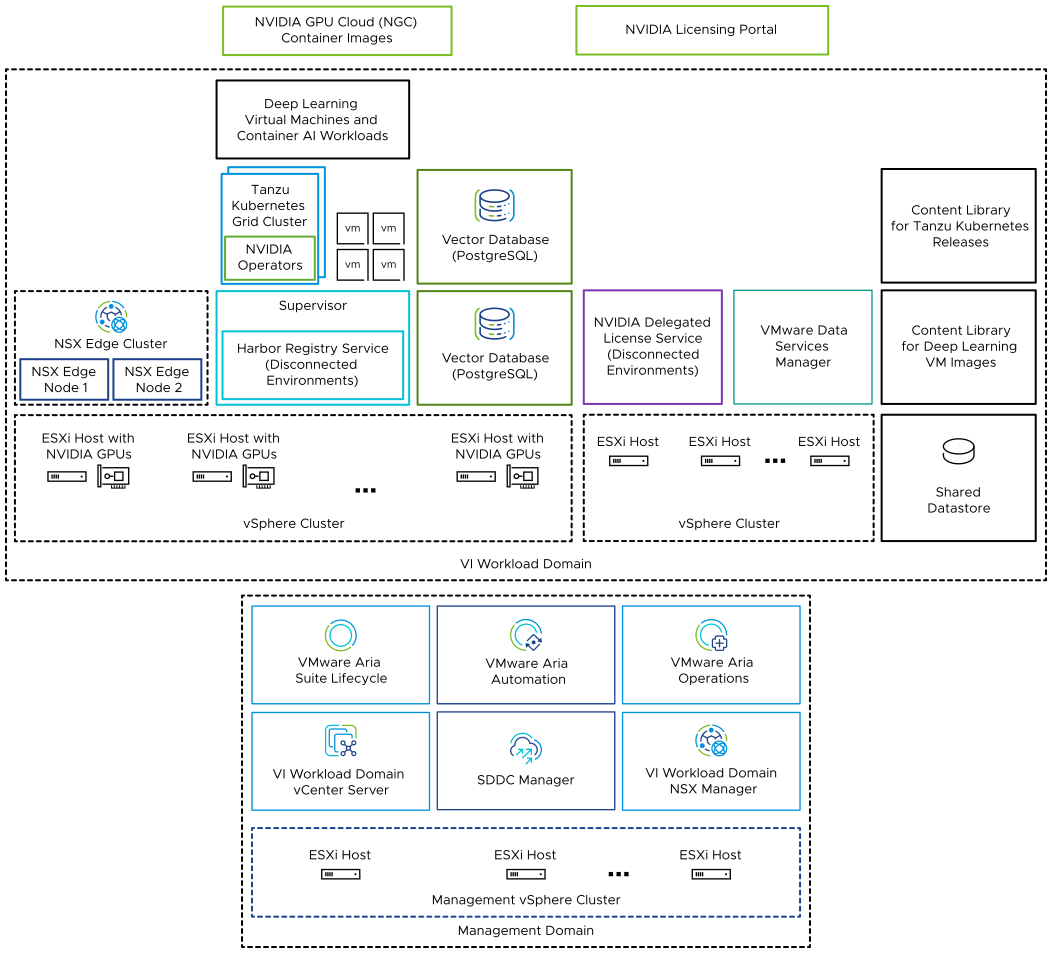

VMware Private AI Foundation with NVIDIA is a multi-component solution for running generative AI workloads. It combines accelerated computing from NVIDIA with virtual infrastructure management and cloud management from VMware Cloud Foundation.

VMware Private AI Foundation with NVIDIA provides a platform for provisioning AI workloads on ESXi hosts with NVIDIA GPUs. VMware also specifically validates running AI workloads that are based on NVIDIA GPU Cloud (NGC) containers.

VMware Private AI Foundation with NVIDIA supports two use cases:

- Development use case

  Cloud administrators and DevOps engineers can provision AI workloads, including Retrieval-Augmented Generation (RAG), in the form of deep learning virtual machines. Data scientists can use these deep learning virtual machines for AI development.

- Production use case

  Cloud administrators can provide DevOps engineers with a VMware Private AI Foundation with NVIDIA environment for provisioning production-ready AI workloads on Tanzu Kubernetes Grid (TKG) clusters on vSphere with Tanzu.

Licensing

You need the VMware Private AI Foundation with NVIDIA add-on license to access the following functionality:

- Private AI setup in VMware Aria Automation, which adds catalog items for easy provisioning of GPU-accelerated deep learning virtual machines and TKG clusters.

- Provisioning of PostgreSQL databases with the pgvector extension with enterprise support.

- Deploying and using the deep learning virtual machine image delivered by VMware by Broadcom.

Under the VMware Cloud Foundation license, you can deploy AI workloads with or without Supervisor enabled and use the GPU metrics in vCenter Server and VMware Aria Operations.

The NVIDIA software components are available for use under an NVIDIA AI Enterprise license.

What are the VMware Private AI Foundation with NVIDIA components?

| Component | Description |
|---|---|
| GPU-enabled ESXi hosts | ESXi hosts that are configured in the following way: |
| Supervisor | One or more vSphere clusters enabled for vSphere with Tanzu so that you can run virtual machines and containers on vSphere by using the Kubernetes API. A Supervisor is a Kubernetes cluster itself, serving as the control plane to manage workload clusters and virtual machines. |
| Harbor registry | A local image registry in a disconnected environment where you host the container images downloaded from the NVIDIA NGC catalog. |
| NSX Edge cluster | A cluster of NSX Edge nodes that provides 2-tier north-south routing for the Supervisor and the workloads it runs. The Tier-0 gateway on the NSX Edge cluster is in active-active mode. |
| NVIDIA Operators | |
| Vector database | A PostgreSQL database that has the pgvector extension enabled so that you can use it in Retrieval-Augmented Generation (RAG) AI workloads. |
| NVIDIA Licensing Portal or NVIDIA Delegated License Service (DLS) | You use the NVIDIA Licensing Portal to generate a client configuration token to assign a license to the guest vGPU driver in the deep learning virtual machine and the GPU Operators on TKG clusters. In a disconnected environment, or if you want your workloads to get license information without using an Internet connection, you host the NVIDIA licenses locally on a Delegated License Service (DLS) appliance. |
| Content library | Content libraries store the images for the deep learning virtual machines and for the Tanzu Kubernetes releases. You use these images for AI workload deployment within the VMware Private AI Foundation with NVIDIA environment. In a connected environment, content libraries pull their content from VMware managed public content libraries. In a disconnected environment, you must upload the required images manually or pull them from an internal content library mirror server. |
| NVIDIA GPU Cloud (NGC) catalog | A portal for GPU-optimized containers for AI and machine learning that are tested and ready to run on supported NVIDIA GPUs on premises on top of VMware Private AI Foundation with NVIDIA. |
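
To make the roles of the Harbor registry and the NVIDIA GPU Operator more concrete, the following minimal sketch builds a Kubernetes Pod manifest that requests one GPU for a container image hosted in a local registry. It is an illustration under assumptions, not a procedure from this documentation: the helper `gpu_pod_manifest`, the registry host `harbor.example.com`, the project path, and the image tag are all hypothetical. The sketch also assumes that the GPU Operator on the TKG cluster advertises GPUs under the standard `nvidia.com/gpu` resource name.

```python
import json


def gpu_pod_manifest(name: str, image: str, gpus: int = 1) -> dict:
    """Build a minimal Pod manifest that requests NVIDIA GPUs.

    Hypothetical helper for illustration only.
    """
    if gpus < 1:
        raise ValueError("gpus must be at least 1")
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            "restartPolicy": "Never",
            "containers": [
                {
                    "name": name,
                    "image": image,
                    # Extended resources such as GPUs are requested under "limits".
                    "resources": {"limits": {"nvidia.com/gpu": gpus}},
                }
            ],
        },
    }


if __name__ == "__main__":
    # Assumption: placeholder Harbor host, project, and tag, not real values.
    manifest = gpu_pod_manifest(
        "cuda-sample", "harbor.example.com/ngc/cuda-sample:example-tag"
    )
    print(json.dumps(manifest, indent=2))
```

Because `kubectl apply -f` accepts JSON as well as YAML, the printed manifest could be saved to a file and applied to a workload cluster for a quick check that GPU scheduling works.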

As a cloud administrator, you use the following management components in VMware Cloud Foundation:

| Management Component | Description |
|---|---|
| SDDC Manager | You use SDDC Manager for the following tasks: |
| VI Workload Domain vCenter Server | You use this vCenter Server instance to enable and configure a Supervisor. |
| VI Workload Domain NSX Manager | SDDC Manager uses this NSX Manager to deploy and update NSX Edge clusters. |
| VMware Aria Suite Lifecycle | You use VMware Aria Suite Lifecycle to deploy and update VMware Aria Automation and VMware Aria Operations. |
| VMware Aria Automation | You use VMware Aria Automation to add self-service catalog items for deploying AI workloads for DevOps engineers and data scientists. |
| VMware Aria Operations | You use VMware Aria Operations for monitoring the GPU consumption in the GPU-enabled workload domains. |
| VMware Data Services Manager | You use VMware Data Services Manager to create vector databases, such as a PostgreSQL database with the pgvector extension. |
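
As a rough illustration of what a vector database contributes to a RAG workload, the sketch below pairs the kind of SQL that pgvector supports with a pure-Python equivalent of the same nearest-neighbor lookup. The table name `documents`, its columns, the three-dimensional embeddings, and the helper functions are assumptions made for this example and are not part of the product. The `<=>` operator is the pgvector cosine distance operator. The SQL strings are shown for reference only and are not executed here.

```python
import math

# Reference SQL for a pgvector-enabled PostgreSQL database.
# Table and column names are hypothetical.
CREATE_SQL = """
CREATE EXTENSION IF NOT EXISTS vector;
CREATE TABLE IF NOT EXISTS documents (
    id bigserial PRIMARY KEY,
    content text,
    embedding vector(3)
);
"""

# Returns the rows whose embeddings are closest to the query embedding.
SEARCH_SQL = (
    "SELECT content FROM documents "
    "ORDER BY embedding <=> %s::vector LIMIT %s;"
)


def cosine_distance(a, b):
    """Cosine distance (1 - cosine similarity), as computed by `<=>`."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    if norm == 0:
        raise ValueError("cosine distance is undefined for a zero vector")
    return 1.0 - dot / norm


def top_k(query, rows, k=2):
    """In-memory stand-in for SEARCH_SQL over (content, embedding) pairs."""
    ranked = sorted(rows, key=lambda row: cosine_distance(query, row[1]))
    return [content for content, _ in ranked[:k]]


if __name__ == "__main__":
    rows = [
        ("GPU sizing guide", [1.0, 0.0, 0.0]),
        ("Network runbook", [0.0, 1.0, 0.0]),
        ("vGPU profile notes", [0.9, 0.1, 0.0]),
    ]
    print(top_k([1.0, 0.0, 0.0], rows, k=2))
```

In a RAG pipeline, the retrieved `content` values would be added to the prompt sent to the language model. Real embeddings typically have hundreds or thousands of dimensions rather than three.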