This topic describes how VMware Tanzu Kubernetes Grid Integrated Edition (TKGI) deploys and manages Kubernetes clusters.

Tanzu Kubernetes Grid Integrated Edition Overview

A Tanzu Kubernetes Grid Integrated Edition environment consists of a TKGI Control Plane and one or more workload clusters.

Tanzu Kubernetes Grid Integrated Edition administrators use the TKGI Control Plane to deploy and manage Kubernetes clusters. The workload clusters run the apps pushed by developers.

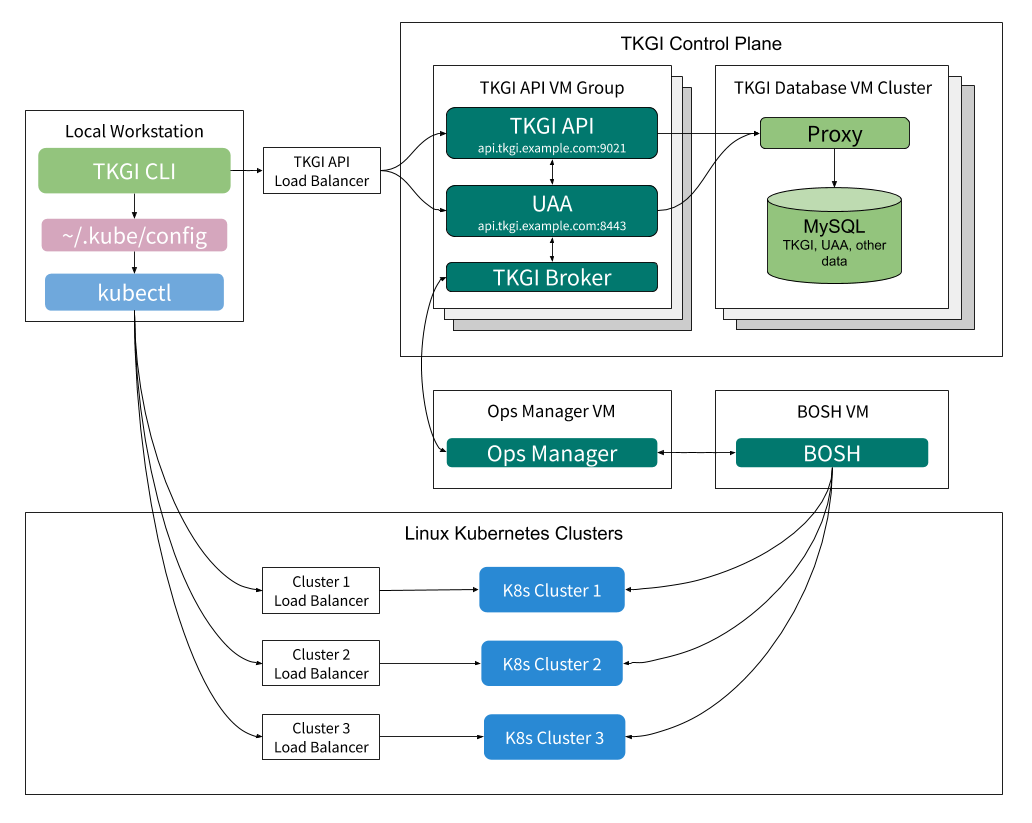

The following illustrates the interaction between Tanzu Kubernetes Grid Integrated Edition components:

Administrators access the TKGI Control Plane through the TKGI Command Line Interface (TKGI CLI) installed on their local workstations.

Within the TKGI Control Plane, the TKGI API and TKGI Broker use BOSH to execute the requested cluster management functions. For information about the TKGI Control Plane, see TKGI Control Plane Overview below. For instructions on installing the TKGI CLI, see Installing the TKGI CLI.

Kubernetes deploys and manages workloads on Kubernetes clusters. Administrators use the Kubernetes CLI, kubectl, to direct Kubernetes from their local workstations. For information about kubectl, see Overview of kubectl in the Kubernetes documentation.

TKGI Control Plane Overview

The TKGI Control Plane manages the lifecycle of Kubernetes clusters deployed using Tanzu Kubernetes Grid Integrated Edition.

The control plane provides the following functions through the TKGI API:

- View cluster plans

- Create clusters

- View information about clusters

- Obtain credentials to deploy workloads to clusters

- Scale clusters

- Delete clusters

- Create and manage network profiles for VMware NSX-T
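Administrators usually reach each of these functions through a TKGI CLI command. The sketch below maps the operations to command lines without executing anything. The operation keys and the `tkgi_argv` helper are hypothetical; the commands shown (`tkgi plans`, `tkgi create-cluster`, `tkgi clusters`, `tkgi cluster`, `tkgi get-credentials`, `tkgi resize`, `tkgi delete-cluster`, `tkgi create-network-profile`) reflect the TKGI CLI as commonly documented, but confirm the exact flags with `tkgi --help` for your TKGI version.

```python
"""Hypothetical mapping of TKGI API operations to TKGI CLI command lines.

Nothing is executed; the helper only builds argv lists. Verify command
names and flags against `tkgi --help` for your TKGI version.
"""

import shlex


def tkgi_argv(operation: str, **kw) -> list:
    """Return the argv list for one operation (hypothetical helper)."""
    builders = {
        "view_plans": lambda: ["tkgi", "plans"],
        "create_cluster": lambda: [
            "tkgi", "create-cluster", kw["name"],
            "--external-hostname", kw["hostname"],
            "--plan", kw["plan"],
        ],
        "list_clusters": lambda: ["tkgi", "clusters"],
        "view_cluster": lambda: ["tkgi", "cluster", kw["name"]],
        "get_credentials": lambda: ["tkgi", "get-credentials", kw["name"]],
        "scale_cluster": lambda: [
            "tkgi", "resize", kw["name"], "--num-nodes", str(kw["nodes"]),
        ],
        "delete_cluster": lambda: ["tkgi", "delete-cluster", kw["name"]],
        "create_network_profile": lambda: [
            "tkgi", "create-network-profile", kw["profile_path"],
        ],
    }
    if operation not in builders:
        raise ValueError(f"unknown operation: {operation}")
    # Only the selected builder runs, so only its keyword arguments are required.
    return builders[operation]()


if __name__ == "__main__":
    # Prints: tkgi resize my-cluster --num-nodes 5
    print(shlex.join(tkgi_argv("scale_cluster", name="my-cluster", nodes=5)))
```

Returning an argv list rather than a shell string keeps the values safe to pass to `subprocess.run` without shell quoting issues.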

In addition, the TKGI Control Plane can upgrade all existing clusters using the Upgrade all clusters BOSH errand. For more information, see Upgrade Kubernetes Clusters in Upgrading Tanzu Kubernetes Grid Integrated Edition (Antrea and Flannel Networking).

The TKGI Control Plane is hosted on two VM groups:

- The TKGI API VM Group, which hosts cluster management services.

- The TKGI Database VM Cluster, which stores cluster management data.

TKGI API VM Group

The instances in the TKGI API VM Group host the following services:

- User Account and Authentication (UAA)

- TKGI API

- TKGI Broker

- Billing and Telemetry

The following sections describe the UAA, TKGI API, and TKGI Broker services, the primary services hosted on the TKGI API VM Group.

UAA

When a user logs in to or logs out of the TKGI API through the TKGI CLI, the TKGI CLI communicates with UAA to authenticate them. The TKGI API permits only authenticated users to manage Kubernetes clusters. For more information about authenticating, see TKGI API Authentication.

UAA must be configured with the appropriate users and user permissions. For more information, see Managing Tanzu Kubernetes Grid Integrated Edition Users with UAA.

TKGI API

Through the TKGI CLI, users instruct the TKGI API service to deploy, scale up, and delete Kubernetes clusters as well as show cluster details and plans. The TKGI API can also write Kubernetes cluster credentials to a local kubeconfig file, which enables users to connect to a cluster through kubectl.
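A kubeconfig file holds the clusters, users, and contexts that kubectl reads. As a minimal sketch of what writing cluster credentials to a kubeconfig involves, the following Python merges one cluster entry into a kubeconfig structure. The `merge_cluster_entry` helper, the server URL, and the bearer-token credential are hypothetical placeholders; the credentials TKGI actually writes may use a different mechanism, such as OIDC settings.

```python
"""Sketch: merge one cluster's credentials into a kubeconfig structure.

Hypothetical helper. The layout follows the standard kubeconfig format
(apiVersion v1, kind Config); names, URL, and token are placeholders.
"""

import json


def merge_cluster_entry(config: dict, name: str, server: str,
                        ca_data: str, token: str) -> dict:
    """Add or replace the cluster, user, and context called `name`,
    then make that context current. Returns the updated config."""
    config.setdefault("apiVersion", "v1")
    config.setdefault("kind", "Config")

    def upsert(section: str, entry: dict) -> None:
        # Drop any existing entry with the same name, then append the new one.
        items = [e for e in config.get(section, []) if e["name"] != entry["name"]]
        items.append(entry)
        config[section] = items

    upsert("clusters", {"name": name, "cluster": {
        "server": server, "certificate-authority-data": ca_data}})
    upsert("users", {"name": name, "user": {"token": token}})
    upsert("contexts", {"name": name, "context": {"cluster": name, "user": name}})
    config["current-context"] = name
    return config


if __name__ == "__main__":
    cfg = merge_cluster_entry({}, "my-cluster", "https://my-cluster.example.com:8443",
                              "BASE64-CA-PLACEHOLDER", "TOKEN-PLACEHOLDER")
    # kubeconfig files are normally YAML; JSON keeps this sketch stdlib-only.
    print(json.dumps(cfg, indent=2))
```

Replacing entries by name, rather than appending blindly, means fetching credentials for the same cluster twice leaves a single entry.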

On AWS, GCP, and vSphere without NSX-T deployments, the TKGI CLI communicates with the TKGI API within the control plane through the TKGI API Load Balancer. On vSphere with NSX-T deployments, the TKGI API host is accessible through a DNAT rule. For information about enabling the TKGI API on vSphere with NSX-T, see the Share the TKGI API Endpoint section in Installing Tanzu Kubernetes Grid Integrated Edition on vSphere with NSX-T Integration.

The TKGI API sends all cluster management requests, except read-only requests, to the TKGI Broker.

TKGI Broker

When the TKGI API receives a request to modify a Kubernetes cluster, it instructs the TKGI Broker to make the requested change.

The TKGI Broker consists of an On-Demand Service Broker and a Service Adapter. The TKGI Broker generates a BOSH manifest and instructs the BOSH Director to deploy or delete the Kubernetes cluster.
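To illustrate what generating a BOSH manifest means, the following is a hedged sketch of a service adapter turning a plan into a BOSH v2-style deployment manifest. The `Plan` fields, release name, VM type, and network name are hypothetical; only the general layout (`name`, `releases`, `stemcells`, `update`, `instance_groups`) follows BOSH manifest conventions, and the `service-instance_` name prefix mirrors on-demand broker deployment naming for illustration only. A real TKGI manifest contains far more, including the job definitions omitted here.

```python
"""Hypothetical sketch of plan-to-manifest generation; not TKGI's adapter."""

import json
from dataclasses import dataclass


@dataclass
class Plan:
    """Hypothetical subset of a cluster plan."""
    control_plane_instances: int
    worker_instances: int
    vm_type: str
    azs: list


def generate_manifest(cluster_guid: str, plan: Plan) -> dict:
    """Build a BOSH v2-style manifest dictionary from a plan."""
    def group(name: str, instances: int) -> dict:
        return {
            "name": name,
            "instances": instances,
            "azs": plan.azs,
            "vm_type": plan.vm_type,
            "stemcell": "default",          # refers to the stemcell alias below
            "networks": [{"name": "default"}],
            "jobs": [],                     # real job definitions omitted
        }

    return {
        "name": f"service-instance_{cluster_guid}",
        "releases": [{"name": "example-k8s-release", "version": "latest"}],
        "stemcells": [{"alias": "default", "os": "ubuntu-jammy", "version": "latest"}],
        "update": {
            "canaries": 1,
            "max_in_flight": 1,
            "canary_watch_time": "30000-300000",
            "update_watch_time": "30000-300000",
        },
        "instance_groups": [
            group("master", plan.control_plane_instances),
            group("worker", plan.worker_instances),
        ],
    }


if __name__ == "__main__":
    plan = Plan(control_plane_instances=1, worker_instances=3,
                vm_type="medium", azs=["az1"])
    print(json.dumps(generate_manifest("example-guid", plan), indent=2))
```

Keeping the plan as a small typed object and the manifest as plain data makes the translation step easy to test independently of the BOSH Director.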

For Tanzu Kubernetes Grid Integrated Edition deployments on vSphere with NSX-T, there is an additional component, the Tanzu Kubernetes Grid Integrated Edition NSX-T Proxy Broker. The TKGI API communicates with the TKGI NSX-T Proxy Broker, which in turn communicates with the NSX Manager to provision the Node Networking resources. The TKGI NSX-T Proxy Broker then forwards the request to the On-Demand Service Broker to deploy the cluster.
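The request paths described in this section can be summarized as a small model. This sketch is hypothetical: the platform keys, operation names, and the split between read-only and mutating operations are assumptions chosen for illustration, and each path is simplified to (caller, callee) hops behind the TKGI API.

```python
"""Hypothetical model of TKGI request paths; not TKGI code."""

READ_ONLY_OPERATIONS = {"view_plans", "view_cluster"}             # assumption
MUTATING_OPERATIONS = {"create_cluster", "scale_cluster", "delete_cluster"}


def api_access(platform: str, nsx_t: bool) -> str:
    """Return how the TKGI CLI reaches the TKGI API on a given platform."""
    if platform not in {"aws", "gcp", "vsphere"}:
        raise ValueError(f"unsupported platform: {platform}")
    if nsx_t and platform != "vsphere":
        raise ValueError("NSX-T integration is modeled only for vSphere")
    return "DNAT rule" if nsx_t else "TKGI API Load Balancer"


def broker_hops(operation: str, nsx_t: bool) -> list:
    """Return the (caller, callee) hops behind the TKGI API for one request."""
    if operation in READ_ONLY_OPERATIONS:
        return []  # the TKGI API answers read-only requests without the broker
    if operation not in MUTATING_OPERATIONS:
        raise ValueError(f"unknown operation: {operation}")
    if nsx_t:
        return [
            ("TKGI API", "TKGI NSX-T Proxy Broker"),
            # The proxy broker provisions node networking first...
            ("TKGI NSX-T Proxy Broker", "NSX Manager"),
            # ...then forwards the request to deploy the cluster.
            ("TKGI NSX-T Proxy Broker", "On-Demand Service Broker"),
            ("On-Demand Service Broker", "BOSH Director"),
        ]
    return [
        ("TKGI API", "On-Demand Service Broker"),  # part of the TKGI Broker
        ("On-Demand Service Broker", "BOSH Director"),
    ]


if __name__ == "__main__":
    print(api_access("vsphere", nsx_t=True))
    for caller, callee in broker_hops("create_cluster", nsx_t=True):
        print(f"{caller} -> {callee}")
```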

TKGI Database VM Cluster

The instances in the TKGI Database VM Cluster host MySQL, proxy, and other data-related services. These data-related services persist TKGI Control Plane data for the following services:

- TKGI API

- UAA

- Billing

- Telemetry

High Availability Modes

Tanzu Kubernetes Grid Integrated Edition can be configured for TKGI Control Plane and workload high availability.

TKGI Control Plane High Availability Mode (Beta)

The TKGI Control Plane can be configured in either standard or high availability (beta) mode.

- In standard mode:

  - The TKGI API is hosted on the `pivotal-container-service` VM.

  - The TKGI Database is hosted on the `pks-db` VM.

- In high availability mode (beta):

  - The TKGI API is hosted on multiple `pivotal-container-service` VMs.

  - The TKGI Database is hosted on three `pks-db` VMs.

Warning: High availability mode is a beta feature. Do not scale your TKGI API or TKGI Database to more than one instance in production environments.

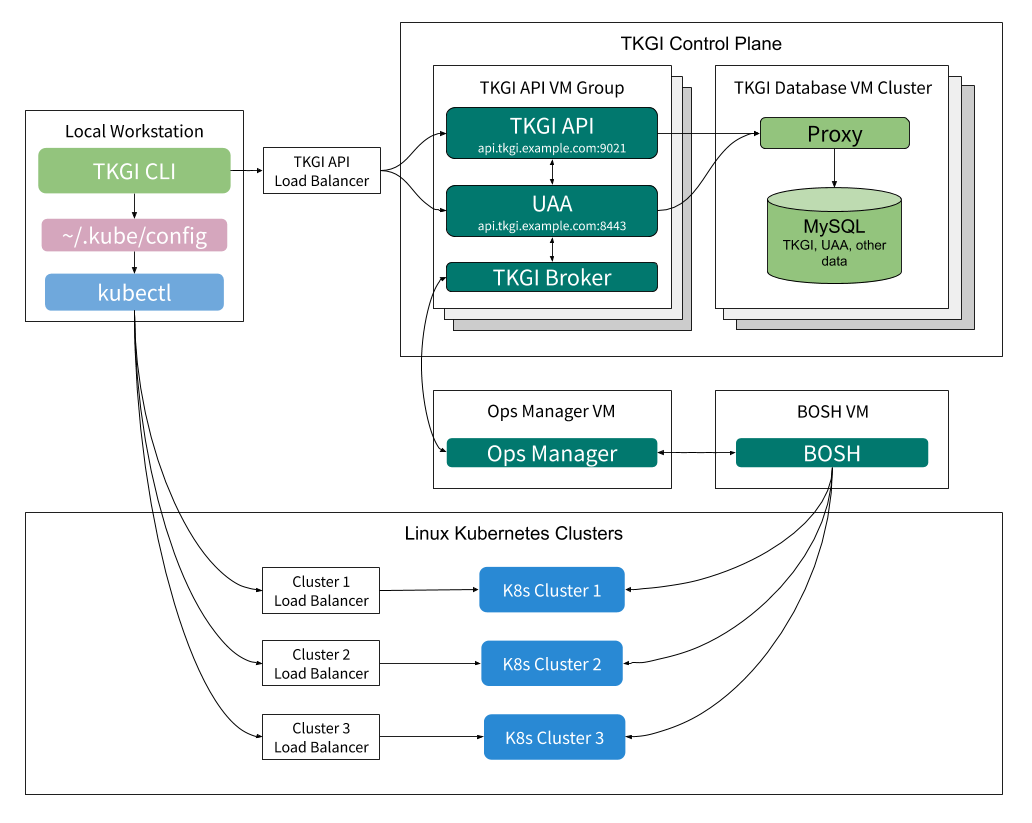

The following illustrates the interaction between Tanzu Kubernetes Grid Integrated Edition components in high availability mode:

You establish HA mode during the resource configuration phase of TKGI tile deployment. You can change the number of instances from 1 to 2 or 3 for the TKGI API, and from 1 to 3 for the TKGI Database. After you set HA mode and increase the number of instances beyond 1, you cannot decrease the number of instances.
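The instance-count rules above can be expressed as a validation check. This is a minimal sketch under stated assumptions: the `check_resize` helper and its component keys are hypothetical, increasing the TKGI API from 2 to 3 instances is assumed to be allowed because it is not a decrease, and the `production` flag encodes the beta warning against running more than one instance in production. The TKGI tile's own validation is authoritative.

```python
"""Hypothetical validation of TKGI Control Plane instance counts; not TKGI code."""

ALLOWED_COUNTS = {
    "tkgi_api": {1, 2, 3},       # 1 in standard mode; 2 or 3 in HA mode
    "tkgi_database": {1, 3},     # 1 in standard mode; 3 in HA mode
}


def check_resize(component: str, current: int, proposed: int,
                 production: bool = False) -> None:
    """Raise ValueError if changing `component` from `current` to
    `proposed` instances breaks the rules described above."""
    if component not in ALLOWED_COUNTS:
        raise ValueError(f"unknown component: {component}")
    allowed = ALLOWED_COUNTS[component]
    if current not in allowed or proposed not in allowed:
        raise ValueError(
            f"{component}: instance count must be one of {sorted(allowed)}")
    if proposed < current:
        raise ValueError(
            f"{component}: cannot decrease from {current} to {proposed} instances")
    if production and proposed > 1:
        # Encodes the beta warning: keep a single instance in production.
        raise ValueError("HA mode is beta; keep one instance in production")


if __name__ == "__main__":
    check_resize("tkgi_database", current=1, proposed=3)  # allowed
    try:
        check_resize("tkgi_database", current=3, proposed=1)
    except ValueError as err:
        print(err)  # tkgi_database: cannot decrease from 3 to 1 instances
```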

Windows Worker-Based Kubernetes Cluster High Availability

The Linux nodes in a Windows worker-based cluster can be configured in either standard or high availability mode.

- In standard mode, a single control plane/etcd node and a single Linux worker manage a cluster’s Windows Kubernetes VMs.

- In high availability mode, multiple control plane/etcd and Linux worker nodes manage a cluster’s Windows Kubernetes VMs.

The following illustrates the interaction between the Tanzu Kubernetes Grid Integrated Edition Management Plane and Windows worker-based Kubernetes clusters:

To configure Tanzu Kubernetes Grid Integrated Edition Windows worker-based clusters for high availability, set these fields in the Plan pane as described in Plans in Configuring Windows Worker-Based Kubernetes Clusters:

- Enable HA Linux workers

- Master/ETCD Node Instances

- Worker Node Instances