You can configure the Kafka Collector to collect data from various data sources.

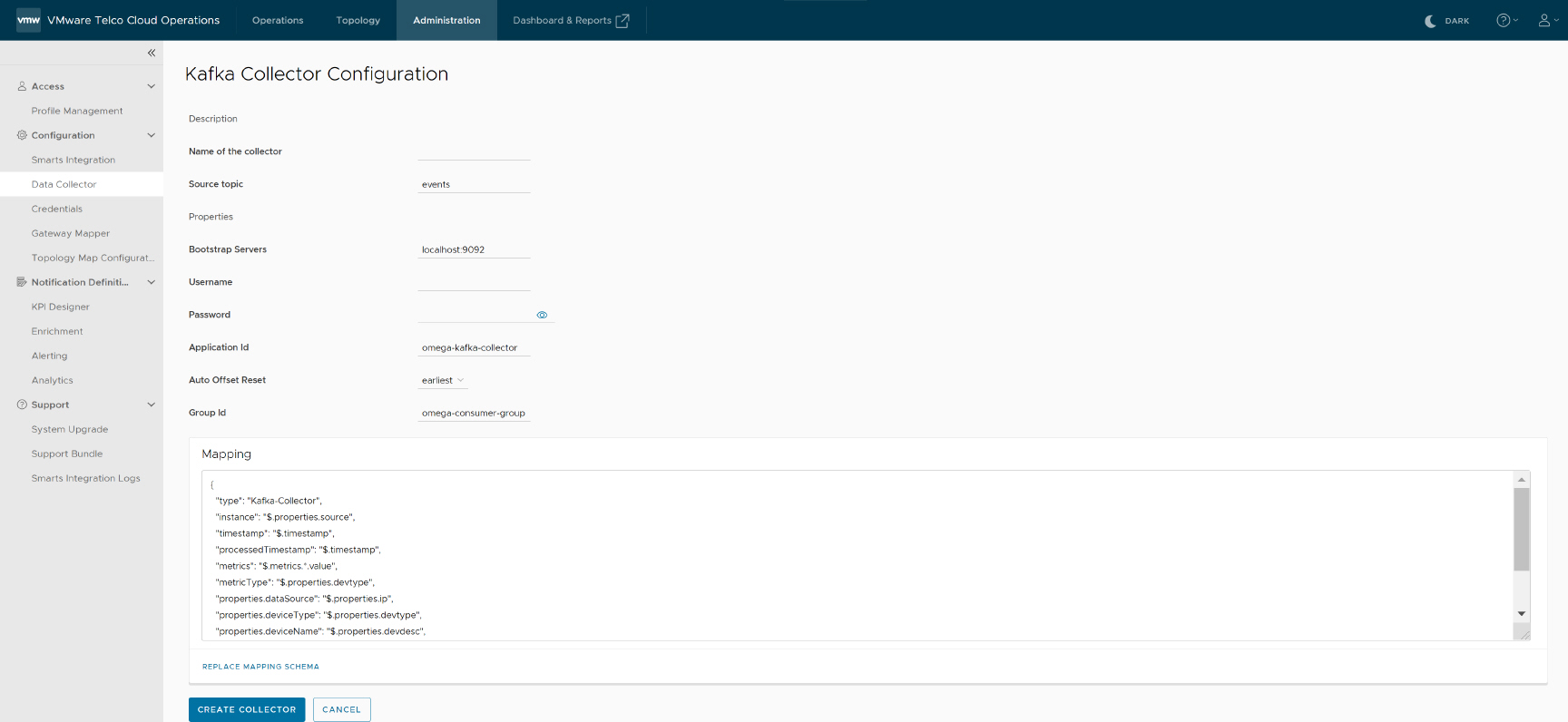

To configure the Kafka collector, open the VMware Telco Cloud Operations UI, navigate to Administration > Configuration > Data Collector > Collector Store, and select kafka-collector.

You must provide the source Kafka topic from which the data comes, as well as the mapping used to process incoming records.

| Input parameter | Description | Default Value |
|---|---|---|
| Name of the collector | Provide the name of the collector. | NA |
| Source topic | Provide the source Kafka topic from which the data comes. | NA |
| Bootstrap servers | Provide the source Kafka broker details. | NA |
| Username | Provide the username for the Kafka broker. | NA |
| Password | Provide the password for the Kafka broker. | NA |
| Application Id | Provide an identifier for the stream processing application. Must be unique within the Kafka cluster. | |
| Auto Offset Reset | Possible values are: | latest |
| Group ID | Provide a unique string that identifies the Connect cluster group this worker belongs to. | |
| Mapping | Maps source properties to destination properties using JSONPath expressions, so that records comply with the VMware Telco Cloud Operations format. Example: `"type": "Kafka-Collector", "instance": "$.properties.source", "timestamp": "$.timestamp", "metricType": "$.properties.devtype", "properties.dataSource": "$.properties.ip", "properties.deviceType": "$.properties.devtype", "properties.deviceName": "$.properties.devdesc", "properties.entityType": "$.properties.type", "properties.entityName": "$.properties.table",` Note: For data ingested through Kafka Mapper, timestamp values must be either a UTC timestamp ("YYYY-MM-DDTHH:mm:ss.sssZ") or a long (ms since epoch). Messages that do not comply are not ingested. For example: | NA |
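
To make the Mapping parameter more concrete, the following Python sketch applies the example mapping to a sample record and checks the timestamp against the two formats named in the note. It is an illustration only, not the collector's implementation. The sample record, the helper names (`resolve`, `apply_mapping`, `is_valid_timestamp`), the treatment of values without a `$.` prefix as literals, and the expansion of dotted destination keys into nested objects are all assumptions. The resolver handles only simple dotted paths, not full JSONPath.

```python
import re
from datetime import datetime

# Mapping copied from the example in the Mapping parameter description.
MAPPING = {
    "type": "Kafka-Collector",
    "instance": "$.properties.source",
    "timestamp": "$.timestamp",
    "metricType": "$.properties.devtype",
    "properties.dataSource": "$.properties.ip",
    "properties.deviceType": "$.properties.devtype",
    "properties.deviceName": "$.properties.devdesc",
    "properties.entityType": "$.properties.type",
    "properties.entityName": "$.properties.table",
}

# Shape of "YYYY-MM-DDTHH:mm:ss.sssZ" (three fractional digits, literal Z).
UTC_PATTERN = re.compile(r"\d{4}-\d{2}-\d{2}T\d{2}:\d{2}:\d{2}\.\d{3}Z")


def resolve(record, expression):
    """Resolve a simple dotted path such as "$.properties.ip".

    Assumption: values that do not start with "$." are literals.
    Returns None when any path segment is missing.
    """
    if not (isinstance(expression, str) and expression.startswith("$.")):
        return expression
    current = record
    for key in expression[2:].split("."):
        if not isinstance(current, dict) or key not in current:
            return None
        current = current[key]
    return current


def apply_mapping(record, mapping):
    """Build the destination record from the source record.

    Assumption: dotted destination keys become nested objects.
    """
    result = {}
    for destination, expression in mapping.items():
        *parents, leaf = destination.split(".")
        target = result
        for parent in parents:
            target = target.setdefault(parent, {})
        target[leaf] = resolve(record, expression)
    return result


def is_valid_timestamp(value):
    """Accept a UTC string or a non-negative integer of ms since epoch."""
    if isinstance(value, bool):  # bool is a subclass of int; reject it
        return False
    if isinstance(value, int):
        return value >= 0
    if isinstance(value, str) and UTC_PATTERN.fullmatch(value):
        try:
            # Also rejects impossible dates such as month 13.
            datetime.strptime(value, "%Y-%m-%dT%H:%M:%S.%fZ")
            return True
        except ValueError:
            return False
    return False


# Hypothetical source record; the field values are invented for illustration.
sample = {
    "timestamp": 1700000000000,
    "properties": {
        "source": "router-01",
        "ip": "10.0.0.1",
        "devtype": "Router",
        "devdesc": "Edge router",
        "type": "Interface",
        "table": "ifTable",
    },
}

mapped = apply_mapping(sample, MAPPING)
print(mapped)
print("timestamp ok:", is_valid_timestamp(mapped["timestamp"]))
```

Running a check like this against a few representative source records before saving the collector configuration can help catch mapping paths that resolve to nothing, or timestamps that would cause messages to be dropped.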