vSphere with Tanzu supports rolling updates for Supervisor Clusters and Tanzu Kubernetes Grid Service clusters, and for the infrastructure supporting these clusters.

How Supervisor Clusters and Tanzu Kubernetes Grid Service Clusters Are Updated

vSphere with Tanzu uses a rolling update model for Supervisor Clusters and Tanzu Kubernetes Grid Service clusters. The rolling update model ensures that there is minimal downtime for cluster workloads during the update process. Rolling updates include upgrading the Kubernetes software versions and the infrastructure and services supporting the Kubernetes clusters, such as virtual machine configurations and resources, vSphere services and namespaces, and custom resources.

Dependency Between Supervisor Cluster Updates and Tanzu Kubernetes Grid Service Cluster Updates

You update the Supervisor Cluster and the Tanzu Kubernetes Grid Service clusters separately. Note, however, that there are dependencies between the two.

Updating a Supervisor Cluster will likely trigger a rolling update of the Tanzu Kubernetes Grid Service clusters deployed there. See Update the Supervisor Cluster by Performing a vSphere Namespaces Update.

You may need to update one or more Tanzu Kubernetes Grid Service clusters before updating a Supervisor Cluster if the Tanzu Kubernetes Grid Service cluster is not compliant with the target Supervisor Cluster version. See Verify Tanzu Kubernetes Cluster Compatibility for Update.

About Supervisor Cluster Updates

When you initiate an update for a Supervisor Cluster, the system creates a new control plane node and joins it to the existing control plane. During this phase of the update, the vSphere inventory shows four control plane nodes, because the system adds the new updated node before it removes an out-of-date node. Objects are migrated from one of the old control plane nodes to the new one, and the old control plane node is then removed. This process repeats one node at a time until all control plane nodes are updated. Once the control plane is updated, the worker nodes are updated in a similar rolling fashion. The worker nodes are the ESXi hosts, and the spherelet process on each ESXi host is updated one host at a time.
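The add-then-remove sequence described above can be illustrated with a small simulation. This is only a sketch of the node-count behavior, not how vSphere implements the update. The node names, version strings, and the function `rolling_control_plane_update` are hypothetical.

```python
def rolling_control_plane_update(nodes, new_version):
    """Simulate replacing control plane nodes one at a time.

    nodes is a list of (name, version) tuples (hypothetical data).
    Returns the updated node list and the peak number of nodes seen.
    """
    current = list(nodes)
    peak = len(current)
    for index, (old_name, old_version) in enumerate(nodes):
        if old_version == new_version:
            continue  # this node is already up to date
        # A new, updated node joins the existing control plane first.
        current.append((f"cp-new-{index}", new_version))
        peak = max(peak, len(current))
        # After objects are migrated, the out-of-date node is removed.
        current.remove((old_name, old_version))
    return current, peak


nodes = [("cp-0", "v1.18"), ("cp-1", "v1.18"), ("cp-2", "v1.18")]
updated, peak = rolling_control_plane_update(nodes, "v1.19")
print(updated)
print(peak)  # 4: three existing nodes plus the one being added
```

With three control plane nodes, the simulated inventory never holds more than four nodes at once, which matches the four nodes visible in the vSphere inventory during the update.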

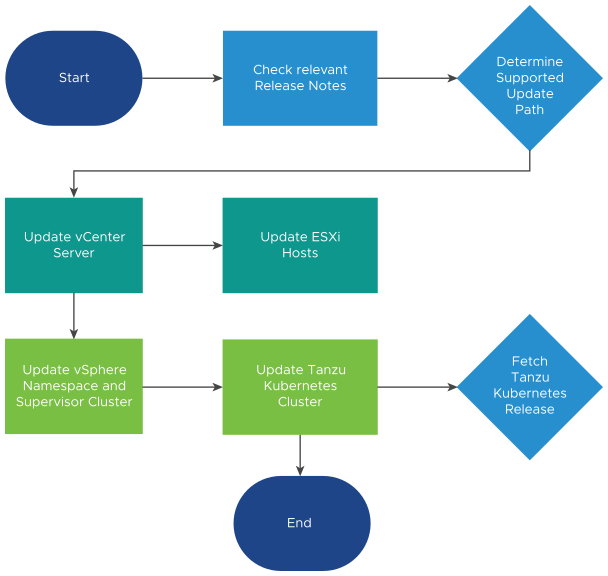

There are two types of updates for Supervisor Clusters:

- Update the vSphere Namespaces.

- Update everything, including VMware versions and Kubernetes versions.

A vSphere Namespaces update consists of the following steps:

- Upgrade vCenter Server.

- Perform a vSphere Namespaces update (including Kubernetes upgrade).

To perform a vSphere Namespaces update, see Update the Supervisor Cluster by Performing a vSphere Namespaces Update.

An update of everything consists of the following steps, performed in order:

- Check the VMware Interoperability matrix at https://interopmatrix.vmware.com/Interoperability to determine compatibility between vCenter Server and NSX. vSphere with Tanzu functionality is delivered by the Workload Control Plane (WCP) software, which ships with vCenter Server.

- Upgrade NSX, if compatible.

- Upgrade vCenter Server.

- Upgrade vSphere Distributed Switch.

- Upgrade ESXi hosts.

- Check compatibility of any provisioned Tanzu Kubernetes Grid Service clusters with the target Supervisor Cluster version.

- Update vSphere Namespaces (including the Supervisor Cluster Kubernetes version).

- Update Tanzu Kubernetes Grid Service clusters.
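Assuming the preceding list is read as an ordered sequence, the following sketch shows one way to track progress through it and catch a step performed out of order. The step labels paraphrase the list, and `UPDATE_STEPS` and `next_step` are hypothetical names used only for illustration. Nothing here calls a vSphere API.

```python
UPDATE_STEPS = [
    "Check interoperability of vCenter Server and NSX",
    "Upgrade NSX, if compatible",
    "Upgrade vCenter Server",
    "Upgrade vSphere Distributed Switch",
    "Upgrade ESXi hosts",
    "Check Tanzu Kubernetes Grid Service cluster compatibility",
    "Update vSphere Namespaces",
    "Update Tanzu Kubernetes Grid Service clusters",
]


def next_step(completed):
    """Return the first step that is not yet completed, or None when done.

    Raises ValueError if a later step is marked complete while an
    earlier one is still pending.
    """
    for index, step in enumerate(UPDATE_STEPS):
        if step not in completed:
            skipped_ahead = [s for s in UPDATE_STEPS[index + 1:] if s in completed]
            if skipped_ahead:
                raise ValueError(f"'{skipped_ahead[0]}' was done before '{step}'")
            return step
    return None


print(next_step(set(UPDATE_STEPS[:3])))  # Upgrade vSphere Distributed Switch
```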

About Tanzu Kubernetes Grid Service Cluster Updates

When you update a Supervisor Cluster, the infrastructure components supporting the Tanzu Kubernetes Grid Service clusters deployed to that Supervisor Cluster, such as the Tanzu Kubernetes Grid Service, are likewise updated. Each infrastructure update can include updates for the services supporting the Tanzu Kubernetes Grid Service (CNI, CSI, CPI), and updated configuration settings for the control plane and worker nodes that can be applied to existing Tanzu Kubernetes Grid Service clusters. To ensure that your configuration meets compatibility requirements, vSphere with Tanzu performs pre-checks during the rolling update and enforces compliance.

To perform a rolling update of a Tanzu Kubernetes Grid Service cluster, you update the cluster manifest. See Update Tanzu Kubernetes Clusters. Note, however, that when a vSphere Namespaces update is performed, the system immediately propagates updated configurations to all Tanzu Kubernetes Grid Service clusters. These updates can automatically trigger a rolling update of the Tanzu Kubernetes Grid Service control plane and worker nodes.

The rolling update process for replacing the cluster nodes is similar to the rolling update of pods in a Kubernetes Deployment. Two distinct controllers are responsible for performing a rolling update of Tanzu Kubernetes Grid Service clusters: the Add-ons Controller and the TanzuKubernetesCluster controller. Across those two controllers there are three key stages to a rolling update: updating add-ons, updating the control plane, and updating the worker nodes. These stages occur in order, with pre-checks that prevent a stage from beginning until the preceding stage has sufficiently progressed. A stage might be skipped if it is determined to be unnecessary. For example, an update might only affect worker nodes and therefore not require any add-on or control plane updates.
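The ordering, pre-check, and skip behavior described above can be sketched as follows. This is a simplified model, not the controllers' actual logic. The function names, the `needs_update` mapping, and the `precheck` callback are all hypothetical.

```python
STAGES = ["add-ons", "control plane", "worker nodes"]


def plan_rolling_update(needs_update):
    """Return the stages to run, in order, skipping unnecessary ones.

    needs_update maps a stage name to True when that stage has changes.
    """
    return [stage for stage in STAGES if needs_update.get(stage, False)]


def run_rolling_update(needs_update, precheck):
    """Run planned stages in order; stop at the first failed pre-check.

    precheck(stage, completed) returns True when the stage may begin.
    """
    completed = []
    for stage in plan_rolling_update(needs_update):
        if not precheck(stage, completed):
            break  # the preceding stage has not progressed far enough
        completed.append(stage)
    return completed


# An update that only affects worker nodes skips the first two stages.
print(plan_rolling_update({"worker nodes": True}))  # ['worker nodes']
```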

Pods running on a Tanzu Kubernetes Grid Service cluster that are not governed by a replication controller will be deleted during a Kubernetes version upgrade, as part of the worker node drain during the Tanzu Kubernetes Grid Service cluster update. This is true whether the cluster update is triggered manually or automatically by a vSphere Namespaces update. Pods not governed by a replication controller include pods that are not created as part of a Deployment or ReplicaSet spec. Refer to the topic Pod Lifecycle: Pod lifetime in the Kubernetes documentation for more information.
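Before an update, it can help to identify pods that have no controller behind them. The sketch below works on plain dictionaries shaped like Kubernetes Pod objects and treats a pod as unmanaged when none of its `metadata.ownerReferences` entries is marked as a controller. That rule is a heuristic chosen for this example, and the pod names and helper functions are hypothetical.

```python
def is_unmanaged(pod):
    """Return True when no owner reference on the pod is a controller."""
    owners = pod.get("metadata", {}).get("ownerReferences") or []
    return not any(ref.get("controller") for ref in owners)


def unmanaged_pod_names(pods):
    """List the names of pods that would not be recreated after a drain."""
    return [pod["metadata"]["name"] for pod in pods if is_unmanaged(pod)]


pods = [
    {
        "metadata": {
            "name": "web-7d4b9",
            "ownerReferences": [
                {"kind": "ReplicaSet", "name": "web-7d4b9c", "controller": True}
            ],
        }
    },
    {"metadata": {"name": "debug-shell"}},  # created directly, no owner
]

print(unmanaged_pod_names(pods))  # ['debug-shell']
```

In practice, the pod dictionaries could come from the JSON output of a pod listing, and any pod reported here would need to be recreated manually or moved under a Deployment or ReplicaSet before the update.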