Persistent Memory (PMem), also known as Non-Volatile Memory (NVM), is capable of maintaining data even after a power outage. PMem can be used by applications that are sensitive to downtime and require high performance.

VMs can be configured to use PMem on a standalone host, or in a cluster. PMem is treated as a local datastore. Persistent memory significantly reduces storage latency. In ESXi you can create VMs that are configured with PMem, and applications inside these VMs can take advantage of this increased speed. Once a VM is initially powered on, PMem is reserved for it regardless of whether it is powered on or off. This PMem stays reserved until the VM is migrated or removed.

Persistent memory can be consumed by virtual machines in two different modes. Legacy guest OSes can still take advantage of the virtual persistent memory disk feature.

- Virtual Persistent Memory (vPMem)

Using vPMem, the memory is exposed to a guest OS as a virtual NVDIMM. This enables the guest OS to use PMem in byte addressable random mode.

Note: You must use VM hardware version 14 and a guest OS that supports NVM technology.

Note: You must use VM hardware version 19 when you configure vSphere HA for PMem VMs. For more information, see Configure vSphere HA for PMem VMs.

- Virtual Persistent Memory Disk (vPMemDisk)

Using vPMemDisk, the memory can be accessed by the guest OS as a virtual SCSI device, but the virtual disk is stored in a PMem datastore.

When you create a VM with PMem, memory is reserved for it at the time of hard disk creation. Admission control is also performed at the time of hard disk creation. For more information, see vSphere HA Admission Control PMem Reservation.

In a cluster, each VM has some capacity for PMem. The total amount of PMem must not be greater than the total amount available in the cluster. The consumption of PMem includes both powered on and powered off VMs. If a VM is configured to use PMem and you do not use DRS, then you must manually pick a host that has sufficient PMem to place the VM on.
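When DRS is not in use, the manual host selection described above amounts to a capacity check. The following is a minimal sketch of that check, not a vSphere API: `HostPMem`, `pick_host`, and the host names and sizes are hypothetical, and the capacity figures would have to come from your own inventory data.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class HostPMem:
    """Hypothetical record of one host's PMem capacity, in GB."""
    name: str
    capacity_gb: int
    reserved_gb: int  # held by both powered-on and powered-off VMs

    @property
    def free_gb(self) -> int:
        return self.capacity_gb - self.reserved_gb


def pick_host(hosts: Sequence[HostPMem], required_gb: int) -> Optional[HostPMem]:
    """Return the host with the most free PMem that can fit the request."""
    candidates = [h for h in hosts if h.free_gb >= required_gb]
    # Preferring the host with the most headroom is a design choice of
    # this sketch, not documented vSphere behavior.
    return max(candidates, key=lambda h: h.free_gb, default=None)


hosts = [HostPMem("esx-a", 512, 448), HostPMem("esx-b", 512, 128)]
choice = pick_host(hosts, 128)
print(choice.name if choice else "no host has enough PMem")
```

Because reservations persist while a VM is powered off, `reserved_gb` must count every VM placed on the host, not only the running ones.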

NVDIMM and traditional storage

NVDIMM is accessed as memory. With traditional storage, a software layer sits between applications and storage devices, which can delay processing. With PMem, applications use the storage directly, so PMem performs better than traditional storage. Storage is local to the host. However, because system software cannot track the changes, solutions such as backups do not currently work with PMem.

Solutions such as vSphere HA have limited scope if vPMem is used in a mode that is not write-through to a non-PMem datastore. When vSphere HA is activated for vPMem VMs with failover enabled, the VM can be failed over to a different host. When this happens, the VM uses the PMem resources on the new host. To free up the resources on the old host, a garbage collector periodically identifies and frees these resources for use by other VMs.
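The garbage collection step can be pictured as finding PMem reservations that remain on a host after the VM has moved elsewhere. The sketch below is only an illustration of that idea under assumed data structures; `stale_reservations` and both dictionaries are hypothetical and do not reflect how ESXi implements its collector.

```python
from typing import Dict, Set


def stale_reservations(
    reservations: Dict[str, Set[str]],
    current_host: Dict[str, str],
) -> Dict[str, Set[str]]:
    """Map each host to the VMs that hold PMem there but now live elsewhere.

    reservations: host name -> names of VMs holding PMem on that host.
    current_host: VM name -> host where the VM is currently placed.
    """
    stale: Dict[str, Set[str]] = {}
    for host, vms in reservations.items():
        # A VM missing from current_host (removed) also counts as stale.
        orphaned = {vm for vm in vms if current_host.get(vm) != host}
        if orphaned:
            stale[host] = orphaned
    return stale


# vm1 failed over from esx-a to esx-b and still holds PMem on esx-a.
reservations = {"esx-a": {"vm1", "vm2"}, "esx-b": {"vm1"}}
current_host = {"vm1": "esx-b", "vm2": "esx-a"}
print(stale_reservations(reservations, current_host))
```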

Namespaces

Namespaces for PMem are configured before ESXi starts. Namespaces are similar to disks on the system. ESXi reads the namespaces and combines multiple namespaces into one logical volume by writing GPT headers. By default, ESXi formats this volume automatically if it has not been configured previously. If the volume has already been formatted, ESXi attempts to mount the PMem.

GPT tables

If the data in PMem storage is corrupted it can cause ESXi to fail. To avoid this, ESXi checks for errors in the metadata during PMem mount time.

PMem regions

A PMem region is a continuous byte stream that represents a single vNVDIMM or vPMemDisk. Each PMem volume belongs to a single host. This could be difficult to manage if an administrator had to manage each host individually in a cluster with a large number of hosts. However, you do not have to manage each individual datastore. Instead, you can think of the entire PMem capacity in the cluster as one datastore.

vCenter Server and DRS automate initial placement of PMem datastores. Select a local PMem storage profile when the VM is created or when the device is added to the VM. The rest of the configuration is automated. One limitation is that ESXi does not allow you to put the VM home on a PMem datastore, because VM log and stat files would take up valuable PMem space. PMem regions represent the VM data and can be exposed as byte addressable NVDIMMs or as vPMem disks.

Migration

Since PMem is a local datastore, if you want to move a VM you must use Storage vMotion. A VM with vPMem can only be migrated to an ESXi host with a PMem resource. A VM with vPMemDisk can be migrated to an ESXi host without a PMem resource.
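The migration rule above can be expressed as a small compatibility check. This is a sketch for illustration only; `PMemMode` and `can_migrate_to` are hypothetical names, not part of any vSphere SDK.

```python
from enum import Enum


class PMemMode(Enum):
    VPMEM = "vPMem"          # exposed to the guest as a virtual NVDIMM
    VPMEMDISK = "vPMemDisk"  # exposed to the guest as a virtual SCSI disk


def can_migrate_to(mode: PMemMode, target_has_pmem: bool) -> bool:
    """Apply the rule stated above for a single target host."""
    if mode is PMemMode.VPMEM:
        # A vPMem VM needs PMem resources on the destination host.
        return target_has_pmem
    # A vPMemDisk VM can also move to a host without PMem.
    return True


print(can_migrate_to(PMemMode.VPMEM, target_has_pmem=False))
print(can_migrate_to(PMemMode.VPMEMDISK, target_has_pmem=False))
```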

Error handling and NVDIMM management

Host failures can result in a loss of availability on vPMem VMs which are not in write-through mode. In the case of catastrophic errors, you may lose all data and must take manual steps to reformat the PMem.

vSphere Persistent Memory with the vSphere Client

For a brief conceptual introduction to Persistent Memory, see:

Enhancements to Working with PMEM in the vSphere Client

For a brief overview of enhancements in the HTML5-based vSphere Client when working with PMem, see:

Migrating and Cloning VMs Using PMEM in the vSphere Client

For a brief overview of migrating and cloning virtual machines that use PMem, see:

Configure vSphere HA for PMem VMs

You can configure vSphere HA for PMem VMs in write-through mode, so that when a host fails VMs can be restored on another functioning host.

Prerequisites

- You must select Hardware version 19.

- PMem VMs with vPMemDisks are not supported.
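The two prerequisites can be checked up front before you enable failover. The following is a minimal sketch; `ha_failover_supported` is a hypothetical helper, and treating hardware version 19 as a minimum rather than an exact requirement is an assumption of this example.

```python
HA_HW_VERSION = 19  # from the prerequisites; used as a minimum (assumption)


def ha_failover_supported(hw_version: int, has_vpmemdisk: bool) -> bool:
    """Return True when a PMem VM meets both stated HA prerequisites."""
    return hw_version >= HA_HW_VERSION and not has_vpmemdisk


print(ha_failover_supported(hw_version=19, has_vpmemdisk=False))
print(ha_failover_supported(hw_version=14, has_vpmemdisk=False))
```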

Procedure

- When creating a new VM in the New Virtual Machine wizard, select Customize hardware.

- Click ADD NEW DEVICE and select Add NVDIMM from the drop-down menu.

- Click the checkbox Allow failover on another host for all NVDIMM devices.

- Click NEXT and complete the New Virtual Machine wizard.

On host failure, NVDIMM PMem data cannot be recovered. By default, HA does not attempt to restart this virtual machine on another host. If you allow HA to fail over the virtual machine on host failure, HA restarts the virtual machine on another host with a new, empty NVDIMM.

- To enable HA on an existing VM, browse to the VM.

- Under VM Hardware, click EDIT.

- Select the NVDIMM.

- Click the checkbox Allow failover on another host for all NVDIMM devices.

- Click OK.

On host failure, HA will restart this virtual machine on another host with new, empty NVDIMMs.

vSphere HA Admission Control PMem Reservation

Admission control is a policy used by vSphere HA to ensure failover capacity within a cluster.

Raising the number of potential host failures to tolerate increases the availability constraints and the capacity reserved. You can reserve a percentage of persistent memory for host failover capacity. This is actual storage capacity that is blocked and must be taken into account when hosts are powered off.

Under Edit Cluster Settings you can select Admission Control to specify the number of host failures the cluster will tolerate.

If you select CPU/Memory reservation defined by:

- Cluster resource percentage, some amount of persistent memory capacity in the cluster is dedicated for failover purposes even if the virtual machines in the cluster are not currently using persistent memory. This percentage can either be specified through an override, or it is automatically calculated according to the host failures to tolerate setting. When PMem admission control is enabled, PMem capacity is reserved across the cluster even if there are VMs using PMem as disks.

- Slot Policy (powered-on VMs), persistent memory admission control overrides the Slot Policy with the Cluster Resource Percentage policy, for persistent memory resources only. The percentage value is automatically calculated from the host failures the cluster tolerates setting and cannot be overridden.

- Dedicated failover hosts, the persistent memory of the dedicated failover hosts is dedicated for failover purposes and you cannot provision virtual machines with persistent memory on these hosts.
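To see how a reserved percentage limits what can be provisioned, consider the arithmetic below. This is a sketch under stated assumptions: the function names and figures are hypothetical, the percentage is taken as an input (for example, an override value), and how vSphere derives the percentage from the host failures to tolerate setting is not modeled here.

```python
def usable_pmem_gb(cluster_total_gb: float, failover_pct: float) -> float:
    """PMem left for VMs after the failover percentage is set aside."""
    if not 0 <= failover_pct <= 100:
        raise ValueError("failover_pct must be between 0 and 100")
    return cluster_total_gb * (100 - failover_pct) / 100


def admits(cluster_total_gb: float, failover_pct: float,
           consumed_gb: float, request_gb: float) -> bool:
    """Check whether a new PMem request fits under the usable capacity.

    consumed_gb should include powered-on and powered-off VMs.
    """
    usable = usable_pmem_gb(cluster_total_gb, failover_pct)
    return consumed_gb + request_gb <= usable


# Hypothetical cluster: 2048 GB of PMem with 25% reserved for failover.
print(usable_pmem_gb(2048, 25))
print(admits(2048, 25, consumed_gb=1400, request_gb=128))
print(admits(2048, 25, consumed_gb=1400, request_gb=256))
```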

vSphere Memory Monitoring and Remediation

vSphere Memory Monitoring and Remediation (vMMR) collects data and provides visibility into performance statistics so you can determine whether your application workload has regressed due to Memory Mode.

Intel Optane Persistent Memory can be configured in BIOS settings in App Direct mode or Memory Mode. In App Direct mode, PMem can be accessed as byte addressable persistent memory alongside DRAM. In Memory Mode, DRAM becomes the hardware cache, and the larger PMem becomes volatile and appears as system memory.

Memory Mode is invisible and transparent to VMs. Once you configure the system in Memory Mode, the system appears as a traditional system with DRAM. A cluster can have a mix of hosts with different configurations. vSphere shows additional information about the system being in Memory Mode. ESXi programs performance counters that gather information about host level and VM level statistics. These performance statistics are used to create alarms. Statistics are also tracked in performance charts.

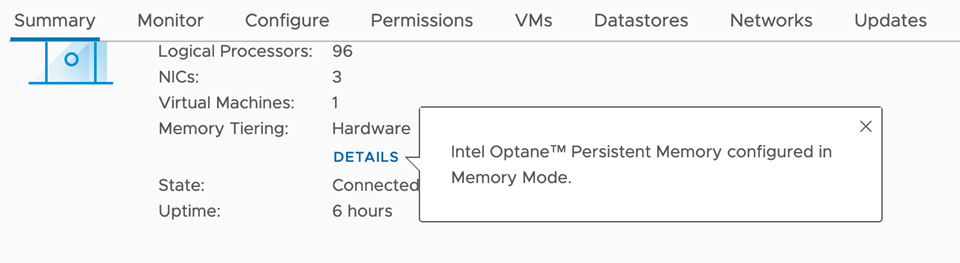

To find out whether the system is in Memory Mode, check the host Summary tab: Memory Tiering under Hardware shows this along with some additional details.

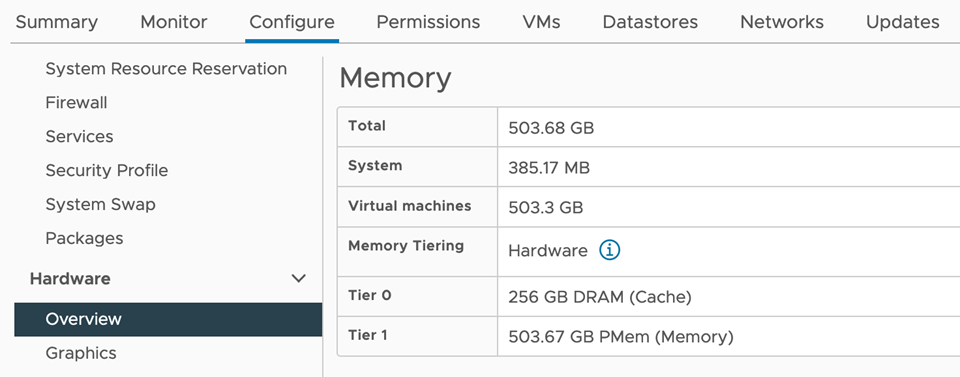

You can also view the size of DRAM and PMEM under .

ESXi gathers and exposes two kinds of memory statistics:

- Host Level Statistics: A memory sub-component measures DRAM and PMem performance by programming performance counters. The host level statistics are total, read, and write bandwidth; read and write latency; and miss rate for the different memory types (DRAM, PMem).

- VM Level Statistics: vSphere monitors performance counters to get data on the DRAM and PMem read bandwidth of the VM.

Both hosts and VMs have a new Memory pane under Performance charts. This shows memory details like Memory Utilization and Memory Reclamation along with the new statistics. On the ESXi host level, you can monitor the Memory Bandwidth and Memory Miss Rate charts. On the VM level, you can view the PMem read bandwidth and DRAM read bandwidth.

From the VMs tab of an ESXi host, you can view a list containing performance information about all virtual machines that reside on the host. To display information about the Memory Mode impact on a virtual machine, click the view columns icon and select the Active Memory, DRAM Read Bandwidth, and PMem Read Bandwidth metrics.

There are two preconfigured default alarms, one at the host level (Host Memory Mode High Active DRAM Usage) and another at the VM level (Virtual Machine High PMem Bandwidth Usage). If the alarm condition is met, an event will be published to trigger the corresponding alarm. You can also create custom alarms based on performance metrics. vMMR alarms only work on hosts configured with Memory Mode.

When DRS is enabled and fully automated in the cluster, if the active memory utilization of the host is above a certain percentage of the size of the DRAM cache, DRS might move some VMs off the host to balance the load.
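The condition DRS evaluates can be illustrated with a simple comparison. This is a sketch only: the function name is hypothetical, and the threshold percentage is a required parameter because the text does not state the value DRS uses.

```python
def exceeds_dram_cache_threshold(active_gb: float, dram_cache_gb: float,
                                 threshold_pct: float) -> bool:
    """True when active memory exceeds threshold_pct of the DRAM cache size."""
    if dram_cache_gb <= 0:
        raise ValueError("dram_cache_gb must be positive")
    return active_gb > dram_cache_gb * threshold_pct / 100


# Hypothetical host in Memory Mode with a 256 GB DRAM cache and an
# illustrative 75% threshold (not a documented DRS value).
print(exceeds_dram_cache_threshold(200, 256, threshold_pct=75))
print(exceeds_dram_cache_threshold(150, 256, threshold_pct=75))
```

The same comparison could back a custom alarm on the host's active memory metric.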

For more information, see vSphere Monitoring and Performance.