Supervisor components, applications, and workloads need to store and retrieve data. Some applications and objects might use transient fast storage, while others require storage that persists.

About Storage Policies

Supervisor uses storage policies to integrate with storage available in your vSphere environment. The policies represent datastores and manage storage placement of such components and objects as control plane VMs, vSphere Pod ephemeral disks, and container images. You might also need policies for storage placement of persistent volumes and VM content libraries. If you use TKG clusters, the storage policies also dictate how the TKG cluster nodes are deployed.

Storage policies support any shared datastores in your environment, such as VMFS, NFS, vSAN (including vSAN ESA), or vVols.

Depending on your vSphere storage environment and the needs of DevOps, you can create several storage policies for different classes of storage. When you enable a Supervisor and set up namespaces, you can assign different storage policies to be used by various objects, components, and workloads.

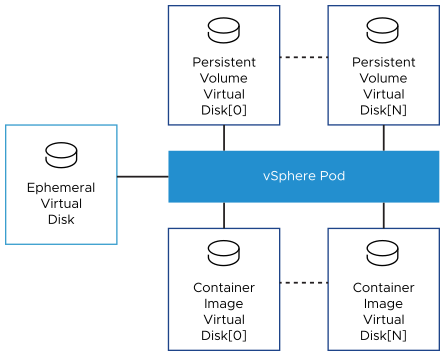

For example, if a vSphere Pod mounts three types of virtual disks and your vSphere storage environment has three classes of datastores, Bronze, Silver, and Gold, you can create storage policies for all datastores. You can then use the Bronze datastore for ephemeral and container image virtual disks, and use the Silver and Gold datastores for persistent volume virtual disks.
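The Bronze, Silver, and Gold example can be written down as a simple planning table. The following is a minimal sketch, not a vSphere API: the policy names and disk type labels are hypothetical and only mirror the example above.

```python
# Hypothetical policy names mirroring the Bronze, Silver, and Gold example.
# Real policy names are whatever the vSphere administrator defines.
DISK_TYPE_POLICIES = {
    "ephemeral": ["bronze"],
    "container-image": ["bronze"],
    "persistent-volume": ["silver", "gold"],
}


def allowed_policies(disk_type):
    """Return the storage policies planned for a given virtual disk type."""
    try:
        return DISK_TYPE_POLICIES[disk_type]
    except KeyError:
        raise ValueError(f"unknown disk type: {disk_type!r}") from None


if __name__ == "__main__":
    for disk_type in DISK_TYPE_POLICIES:
        print(disk_type, "->", ", ".join(allowed_policies(disk_type)))
```

A table like this can help when you decide which policies to assign at the Supervisor level and which to assign to namespaces.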

For information about creating storage policies, see Create Storage Policies in the Installing and Configuring vSphere IaaS Control Plane documentation.

For general information about storage policies, see the Storage Policy Based Management chapter in the vSphere Storage documentation.

Storage Policies for a Supervisor

At the Supervisor level, you configure a storage policy for Supervisor control plane VMs. In addition, if your deployment supports vSphere Pods, you assign storage policies and specify the datastore locations for ephemeral disks and container images. For information about setting storage when enabling Supervisor, see the Installing and Configuring vSphere IaaS Control Plane documentation. To change storage settings, see Change Storage Settings on the Supervisor.

- Control Plane Storage Policy

  This policy ensures that the control plane VMs are placed on the datastores that the policy represents.

- Ephemeral Virtual Disks

  A vSphere Pod requires ephemeral storage to store such Kubernetes objects as logs, emptyDir volumes, and ConfigMaps during its operations. This ephemeral, or transient, storage lasts as long as the pod continues to exist. Ephemeral data persists across container restarts, but once the pod reaches the end of its life, the ephemeral virtual disk disappears.

  Each pod has one ephemeral virtual disk. As a vSphere administrator, you use a storage policy to define the datastore location for all ephemeral virtual disks when configuring storage for the Supervisor.

- Container Image Virtual Disks

  Containers inside the vSphere Pod use images that contain the software to be run. The pod mounts images used by its containers as image virtual disks. When the pod completes its life cycle, the image virtual disks are detached from the pod.

  Image Service, an ESXi component, is responsible for pulling container images from the image registry and transforming them into virtual disks to run inside the pod.

  ESXi can cache images that are downloaded for the containers running in the pod. Subsequent pods that use the same image pull it from the local cache rather than the external container registry.

Persistent Storage for Workloads

Certain Kubernetes workloads that DevOps run on a namespace require persistent storage to store data permanently.

Persistent storage can be used by vSphere Pods, TKG clusters, VMs, and other workloads you run on the namespace. To make persistent storage available to the DevOps team, the vSphere administrator creates storage policies that describe different storage requirements and classes of services. The administrator then assigns storage policies and configures storage limits at a namespace level.

To understand how vSphere IaaS control plane works with persistent storage, be familiar with such essential Kubernetes concepts as storage classes, persistent volumes, and persistent volume claims. For more information, see the Kubernetes documentation at https://kubernetes.io/docs/home/.
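To illustrate how these concepts fit together, the following minimal sketch builds a persistent volume claim manifest that requests a ReadWriteOnce volume from a storage class. It assumes that a storage policy assigned to the namespace is available to DevOps as a storage class; the names `demo-pvc` and `gold` and the `build_pvc` helper are hypothetical.

```python
import json


def build_pvc(name, storage_class, size="1Gi"):
    """Build a PersistentVolumeClaim manifest as a plain dictionary."""
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name},
        "spec": {
            # ReadWriteOnce: the volume is mounted read-write by a single node.
            "accessModes": ["ReadWriteOnce"],
            # Assumption: "gold" is a storage class available in the namespace.
            "storageClassName": storage_class,
            "resources": {"requests": {"storage": size}},
        },
    }


if __name__ == "__main__":
    # kubectl accepts JSON manifests as well as YAML ones.
    print(json.dumps(build_pvc("demo-pvc", "gold", "5Gi"), indent=2))
```

The printed JSON can be saved to a file and applied with `kubectl apply -f`, provided that the storage class name matches one that exists in your namespace.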

To learn about persistent storage for TKG clusters, see Storage for TKG Clusters.

For information about using persistent storage, see Using Persistent Storage with Workloads in the vSphere IaaS Control Plane Services and Workloads documentation.

If your DevOps team plans to deploy third-party services that use vSAN Direct for their persistent storage needs, see Enable Stateful Services in the vSphere IaaS Control Plane Services and Workloads documentation.

How Supervisor Integrates with vSphere Storage

Supervisor uses several components to integrate with vSphere storage.

- Cloud Native Storage (CNS) on vCenter Server

  The CNS component resides in vCenter Server. It is an extension of vCenter Server management that implements provisioning and lifecycle operations for persistent volumes.

- First Class Disk (FCD)

  Also called Improved Virtual Disk. These disks reside on datastores and back ReadWriteOnce persistent volumes.

  When you use FCDs, keep in mind the following:

  - FCDs do not support NFS 4.x protocols. Instead, use NFS 3.

  - vCenter Server does not serialize operations on the same FCD. As a result, applications cannot safely perform simultaneous operations on the same FCD. Performing such operations as clone, relocate, delete, retrieve, and so on simultaneously from different threads causes unpredictable results. To avoid problems, applications must perform operations on the same FCD in a sequential order.

  - FCD is not a managed object and does not support a global lock protecting multiple writes to a single FCD. As a result, FCD does not support multiple vCenter Server instances managing the same FCD. If you need to use multiple vCenter Server instances with FCDs, you have the following options:

    - Have each vCenter Server instance manage different datastores.

    - Ensure that multiple vCenter Server instances do not operate on the same FCD.

- Storage Policy Based Management

  Storage Policy Based Management is a vCenter Server service that supports provisioning of persistent volumes and their backing virtual disks according to storage requirements described in a storage policy. After provisioning, the service monitors compliance of the volume with the storage policy characteristics. For more information about Storage Policy Based Management, see the Storage Policy Based Management chapter in the vSphere Storage documentation.

- vSphere CNS-CSI

  The vSphere CNS-CSI component conforms to the Container Storage Interface (CSI) specification, an industry standard designed to provide an interface that container orchestrators like Kubernetes use to provision persistent storage. The CNS-CSI driver runs in the Supervisor and connects vSphere storage to the Kubernetes environment on a namespace. The vSphere CNS-CSI communicates directly with the CNS component for all storage provisioning requests that originate from the namespace.
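Because vCenter Server does not serialize operations on the same FCD, an application that works with FCDs from several threads has to do the ordering itself. The following is a minimal sketch of one way to do that inside a single process, using one lock per FCD. The `FcdSerializer` class and the `operation` callable are hypothetical; they stand in for whatever client code actually issues the clone, relocate, or delete request.

```python
import threading
from collections import defaultdict


class FcdSerializer:
    """Run operations on the same FCD one at a time within this process."""

    def __init__(self):
        # Protects the lock table itself while a per-FCD lock is looked up.
        self._guard = threading.Lock()
        self._locks = defaultdict(threading.Lock)

    def run(self, fcd_id, operation, *args, **kwargs):
        """Call operation(*args, **kwargs) while holding the lock for fcd_id."""
        with self._guard:
            lock = self._locks[fcd_id]
        # Operations on different FCDs use different locks and can overlap.
        with lock:
            return operation(*args, **kwargs)
```

This approach only orders calls made through the same `FcdSerializer` object. It does not coordinate separate processes or separate vCenter Server instances, which is why the options listed for the FCD component still apply.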

Functionality Supported by vSphere CNS-CSI

The vSphere CNS-CSI component that runs in the Supervisor supports multiple vSphere and Kubernetes storage features. However, certain limitations apply.

| Supported Functionality | vSphere CNS-CSI with Supervisor |
|---|---|
| CNS Support in the vSphere Client | Yes |
| Enhanced Object Health in the vSphere Client | Yes (vSAN only) |
| Dynamic Block Persistent Volume (ReadWriteOnce Access Mode) | Yes |
| Dynamic File Persistent Volume (ReadWriteMany Access Mode) | No |
| vSphere Datastore | VMFS, NFS, vSAN (including vSAN ESA), vVols |
| Static Persistent Volume | Yes |
| Encryption | No |
| Offline Volume Expansion | Yes |
| Online Volume Expansion | Yes |
| Volume Topology and Zones | Yes. Volumes can be consumed only by TKG clusters. |
| Kubernetes Multiple Control Plane Instances | Yes |
| WaitForFirstConsumer | No |
| VolumeHealth | Yes |
| Storage vMotion with Persistent Volumes | No |
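As an illustration of how the access mode rows in this table might be applied, the following minimal sketch checks a persistent volume claim manifest, represented as a dictionary, before it is submitted. The helper name and the manifest are hypothetical, and the lookup covers only the two access modes that the table lists.

```python
# Access modes as listed in the table above for dynamic provisioning.
# Modes that the table does not mention are deliberately not judged here.
DYNAMIC_ACCESS_MODE_SUPPORT = {
    "ReadWriteOnce": True,   # Dynamic Block Persistent Volume: Yes
    "ReadWriteMany": False,  # Dynamic File Persistent Volume: No
}


def unsupported_access_modes(pvc):
    """Return the requested access modes that the table marks as not supported."""
    modes = pvc.get("spec", {}).get("accessModes", [])
    return [m for m in modes if DYNAMIC_ACCESS_MODE_SUPPORT.get(m) is False]


if __name__ == "__main__":
    claim = {"spec": {"accessModes": ["ReadWriteMany"]}}
    print(unsupported_access_modes(claim))
```

A check like this could run in a review step or CI job so that an unsupported request is caught before the claim stays pending in the namespace.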

Persistent Storage and Supervisor with vSphere Zones

A three-zone Supervisor supports zonal storage, where a datastore is shared across all hosts in a single zone.

- Storage in all three zones does not need to be of the same type. However, having uniform storage in all three clusters provides consistent performance.

- For the namespace on the three-zone Supervisor, use a storage policy that is compliant with the shared storage in each of the clusters. The storage policy must be topology aware.

- Do not remove topology constraints from the storage policy after assigning it to the namespace.

- Do not mount zonal datastores on other zones.

- A three-zone Supervisor does not support the following items:

  - Cross-zonal volumes

  - vSAN File volumes (ReadWriteMany Volumes)

  - Static volume provisioning using Register Volume API

  - Workloads that use vSAN Data Persistence platform

  - vSphere Pod

  - vSAN Stretched Clusters

  - VMs with vGPU and instance storage
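The rule that zonal datastores must not be mounted on other zones can be checked against an inventory listing. The following is a minimal sketch that assumes you have already collected, by whatever means you prefer, a mapping from each datastore name to the zones whose hosts mount it. The function name and the sample data are hypothetical.

```python
def datastores_spanning_zones(mounts):
    """Return, sorted by name, the datastores mounted in more than one zone.

    mounts maps a datastore name to an iterable of zone names, with one
    entry per host that mounts the datastore (duplicates are fine).
    """
    return sorted(name for name, zones in mounts.items() if len(set(zones)) > 1)


if __name__ == "__main__":
    # Hypothetical inventory: "ds-shared" is mounted by hosts in two zones.
    inventory = {
        "ds-zone1": ["zone-1", "zone-1"],
        "ds-zone2": ["zone-2"],
        "ds-shared": ["zone-2", "zone-3"],
    }
    print(datastores_spanning_zones(inventory))
```

Any datastore that the function reports is a candidate for review before you use it with a topology-aware storage policy.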

For more information, see Using Persistent Storage on a Three-Zone Supervisor in the vSphere IaaS Control Plane Services and Workloads documentation.