The management domain in your environment must be upgraded before you upgrade VI workload domains. To upgrade to VMware Cloud Foundation 4.5.x, all VI workload domains in your environment must be at VMware Cloud Foundation 4.2.1 or higher. If your environment is at a version lower than 4.2.1, you must first upgrade the workload domains to 4.2.1 and then upgrade them to 4.5.x.
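The version requirement above can be expressed as a small planning helper. This is a minimal sketch, not an SDDC Manager feature: `parse_version` and `upgrade_path` are hypothetical names, and the code assumes purely numeric, dot-separated version strings such as `4.4.1.1`.

```python
from typing import List, Tuple

# Lowest VMware Cloud Foundation release from which a VI workload domain
# can go straight to 4.5.x, per the requirement above.
MIN_DIRECT_SOURCE: Tuple[int, ...] = (4, 2, 1)


def parse_version(version: str) -> Tuple[int, ...]:
    """Hypothetical helper: convert '4.4.1.1' to (4, 4, 1, 1)."""
    return tuple(int(part) for part in version.split("."))


def upgrade_path(current: str) -> List[str]:
    """Hypothetical helper: ordered target releases for a VI workload domain."""
    if parse_version(current) < MIN_DIRECT_SOURCE:
        # Below 4.2.1: upgrade to 4.2.1 first, then to 4.5.x.
        return ["4.2.1", "4.5.x"]
    # 4.2.1 or later: a direct upgrade to 4.5.x is possible.
    return ["4.5.x"]


if __name__ == "__main__":
    for version in ("4.1.0.1", "4.2.1", "4.4.1.1"):
        print(version, "->", " -> ".join(upgrade_path(version)))
```

Tuple comparison handles versions with different numbers of fields, so `4.2` is treated as lower than `4.2.1`.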

Upgrade the VI workload domain components in the following order:

- NSX-T.

- vCenter Server.

- If you have stretched clusters in your environment, upgrade the vSAN witness host. See Upgrade vSAN Witness Host for VMware Cloud Foundation.

- ESXi.

- Workload Management on clusters that have vSphere with Tanzu. Workload Management can be upgraded through vCenter Server. See Updating the vSphere with Tanzu Environment.

After the upgrade is complete, perform the following tasks:

- If you suppressed the Enter Maintenance Mode prechecks for ESXi or NSX, delete the following lines from the /opt/vmware/vcf/lcm/lcm-app/conf/application-prod.properties file and restart the LCM service:

lcm.nsxt.suppress.dry.run.emm.check=true

lcm.esx.suppress.dry.run.emm.check.failures=true

- For NFS-based workload domains, add a static route for hosts to access NFS storage over the NFS gateway. See Post Upgrade Steps for NFS-Based VI Workload Domains.

- Remove the VM snapshots you took before starting the update.

- Take a backup of the newly installed components.
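The cleanup task above, which deletes the Enter Maintenance Mode precheck suppression properties, can be scripted. This is a minimal sketch under stated assumptions: the function names are hypothetical, it only recognizes `key=value` lines as shown above (not the `:` or whitespace separators that properties files also allow), it removes the keys whatever their value, and it does not restart the LCM service; you still do that afterward.

```python
from pathlib import Path
from typing import Tuple

# Property keys that suppress the Enter Maintenance Mode prechecks (from the step above).
SUPPRESSION_KEYS = {
    "lcm.nsxt.suppress.dry.run.emm.check",
    "lcm.esx.suppress.dry.run.emm.check.failures",
}


def strip_suppression(text: str) -> Tuple[str, int]:
    """Return (text without the suppression properties, number of lines removed)."""
    kept = []
    removed = 0
    for line in text.splitlines(keepends=True):
        # The key is everything before the first '='; commented-out lines never match.
        key = line.split("=", 1)[0].strip()
        if key in SUPPRESSION_KEYS:
            removed += 1
        else:
            kept.append(line)
    return "".join(kept), removed


def remove_suppression_lines(path: Path) -> int:
    """Rewrite the properties file at `path` in place; return the lines removed."""
    cleaned, removed = strip_suppression(path.read_text())
    if removed:
        path.write_text(cleaned)
    return removed
```

For example, `remove_suppression_lines(Path("/opt/vmware/vcf/lcm/lcm-app/conf/application-prod.properties"))` would rewrite that file, assuming a Python 3 interpreter is available where the file is accessible. Keeping the text transformation in a separate function makes it easy to check before touching the real file.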

VMware Cloud Foundation Upgrade Prerequisites

Before you upgrade VMware Cloud Foundation, make sure that the following prerequisites are met.

- Take a backup of the SDDC Manager appliance using an external SFTP server. See the "Backup and Restore of VMware Cloud Foundation" section in the VMware Cloud Foundation Administration Guide.

- Before you upgrade a vCenter Server, take a file-based backup. See Manually Back Up vCenter Server.

- No domain operations are in progress. Domain operations include creating VI workload domains, expanding a workload domain (adding a cluster or host), and shrinking a workload domain (removing a cluster or host).

- Download the relevant bundles. See Downloading VMware Cloud Foundation Upgrade Bundles.

Note: If you downloaded the bundles manually, you must download all bundles for the target release and upload them to the SDDC Manager appliance before starting the upgrade.

- If you applied an async patch to your current VMware Cloud Foundation instance, you must use the Async Patch Tool to enable an upgrade to a later version of VMware Cloud Foundation. For example, if you applied an async vCenter Server patch to a VMware Cloud Foundation 4.3.1 instance, you must use the Async Patch Tool to enable the upgrade to VMware Cloud Foundation 4.5.x. See Async Patch Tool.

- Make sure that there are no failed workflows in your system and that none of the VMware Cloud Foundation resources are in an activating or error state.

Caution: If any of these conditions are true, contact VMware Support before starting the upgrade.

- Ensure that passwords for all VMware Cloud Foundation components are valid.

- Review the Release Notes for known issues related to upgrades.
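Three of the prerequisites above (no domain operations in progress, no failed workflows, no resources in an activating or error state) can be treated as a simple go/no-go check. This is a hypothetical sketch: `Resource` and `upgrade_blockers` are illustrative names, the state strings are assumptions, and the inputs are values you gather yourself; the code does not query SDDC Manager.

```python
from dataclasses import dataclass
from typing import List

# States that, per the prerequisites above, must not be present before upgrading.
BLOCKING_STATES = {"ACTIVATING", "ERROR"}


@dataclass
class Resource:
    """Hypothetical record of one VMware Cloud Foundation resource and its state."""
    name: str
    state: str


def upgrade_blockers(resources: List[Resource], failed_workflows: int,
                     domain_operations_in_progress: int) -> List[str]:
    """List unmet prerequisites; an empty list means these checks pass."""
    blockers: List[str] = []
    if domain_operations_in_progress:
        blockers.append(f"{domain_operations_in_progress} domain operation(s) in progress")
    if failed_workflows:
        blockers.append(f"{failed_workflows} failed workflow(s)")
    for resource in resources:
        # Compare case-insensitively so "error" and "ERROR" are treated alike.
        if resource.state.upper() in BLOCKING_STATES:
            blockers.append(f"{resource.name} is in {resource.state.upper()} state")
    return blockers
```

Per the caution above, a failed workflow or a resource in an activating or error state is a reason to contact VMware Support rather than to proceed.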

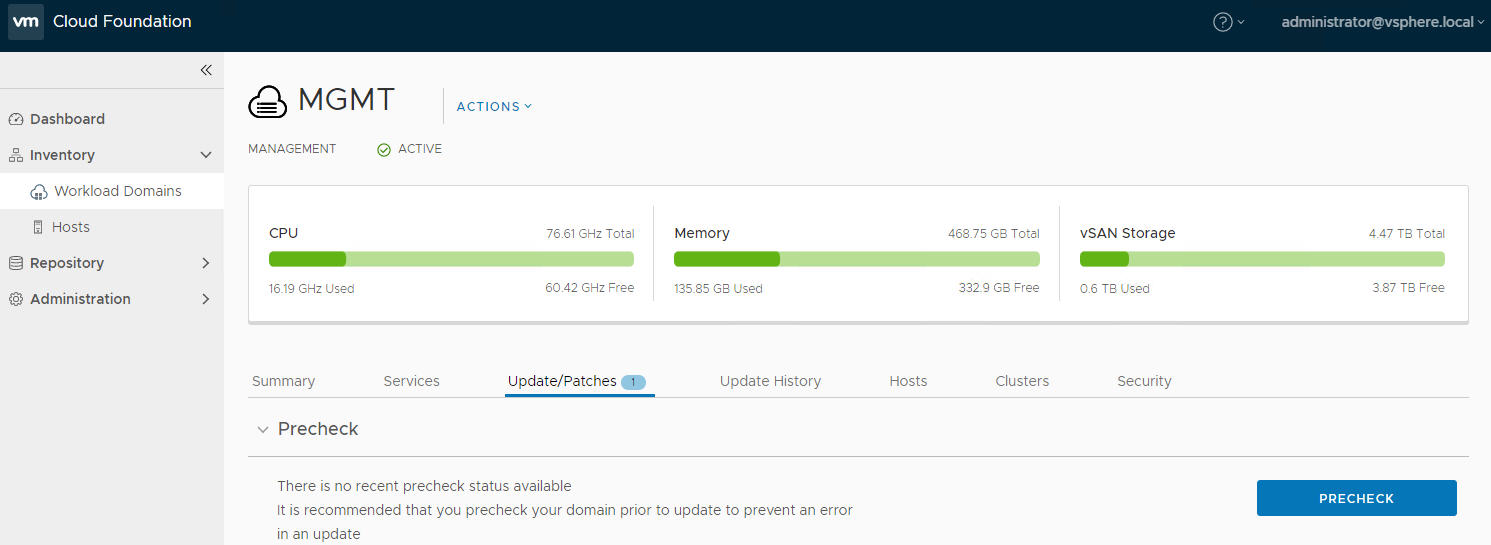

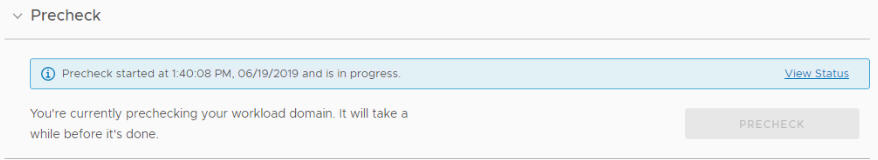

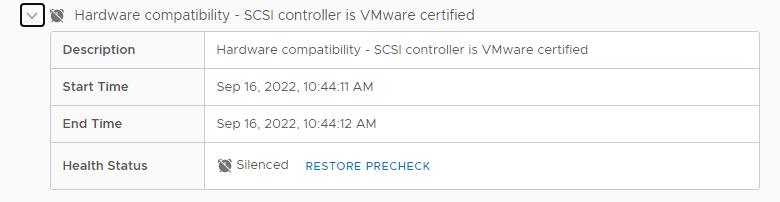

Perform Update Precheck

You must perform a precheck before applying an update or upgrade bundle to ensure that your environment is ready for the update. Among other checks, the precheck verifies that:

- Custom ISO is compatible with your environment.

- Custom ISO size is smaller than the boot partition size.

- Third-party VIBs are compatible with the environment.
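The size rule in the list above is a plain comparison. The following is a minimal sketch that only mirrors that one rule; it is not how SDDC Manager performs the precheck, the function names are hypothetical, and the boot partition size is a value you supply rather than one read from the host.

```python
import os


def iso_fits_boot_partition(iso_size_bytes: int, boot_partition_bytes: int) -> bool:
    """True when the custom ISO is strictly smaller than the boot partition."""
    if iso_size_bytes < 0 or boot_partition_bytes <= 0:
        raise ValueError("sizes must be non-negative and the partition size positive")
    return iso_size_bytes < boot_partition_bytes


def iso_file_fits(iso_path: str, boot_partition_bytes: int) -> bool:
    """Apply the size rule to an ISO file on local disk."""
    return iso_fits_boot_partition(os.path.getsize(iso_path), boot_partition_bytes)
```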

Procedure

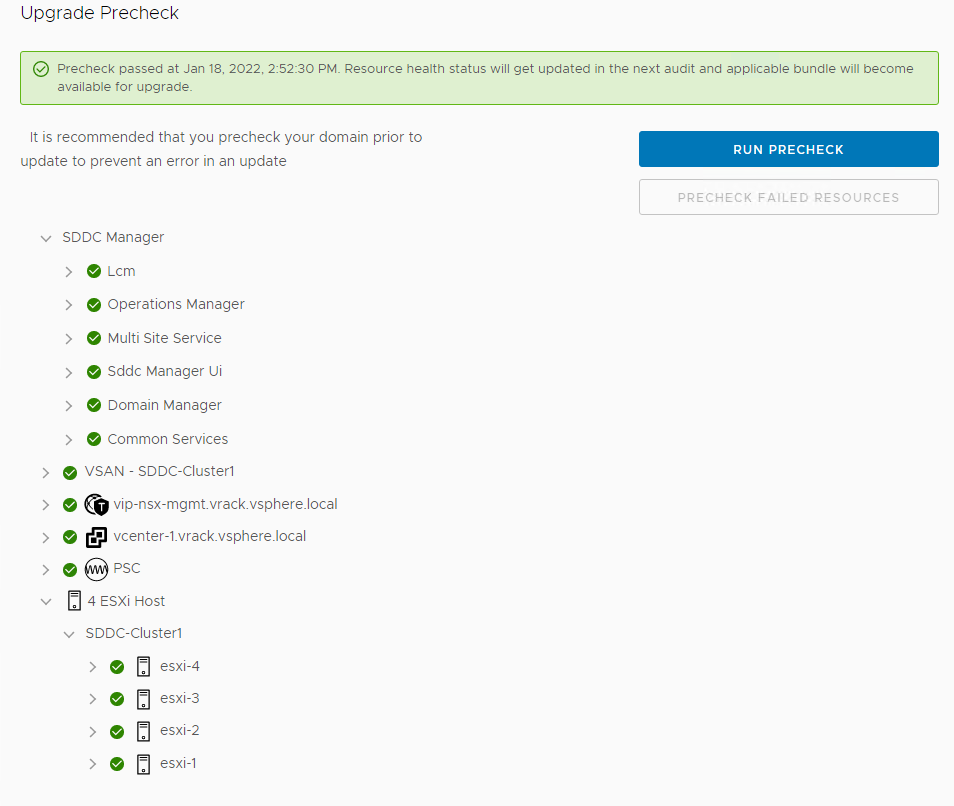

Results

The precheck result is displayed at the top of the Upgrade Precheck Details window. If you click Exit Details, the precheck result is displayed at the top of the Precheck section in the Updates/Patches tab.

Ensure that the precheck results are green before proceeding. A failed precheck may cause the update to fail.

Upgrade NSX-T Data Center for VMware Cloud Foundation

Upgrade NSX-T Data Center in the management domain before you upgrade VI workload domains. The NSX-T Data Center components are upgraded in the following order:

- Upgrade Coordinator

- NSX Edge clusters (if deployed)

- NSX Edge

- Host clusters

- NSX Manager cluster

VI workload domains can share the same NSX Manager cluster and NSX Edge clusters. When you upgrade these components for one VI workload domain, they are upgraded for all VI workload domains that share the same NSX Manager or NSX Edge cluster. You cannot perform any operations on the VI workload domains while NSX-T is being upgraded.
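Because shared NSX components are upgraded for every VI workload domain that uses them, it helps to know which domains an upgrade will touch before you schedule it. This is a hypothetical sketch: the domain-to-NSX Manager and domain-to-Edge cluster mappings are data you maintain yourself, and the function name is illustrative, not part of SDDC Manager.

```python
from typing import Dict, Set


def domains_affected_by_nsx_upgrade(target: str,
                                    nsx_manager_of: Dict[str, str],
                                    edge_cluster_of: Dict[str, str]) -> Set[str]:
    """Return every VI workload domain upgraded along with `target`, including itself."""
    manager = nsx_manager_of[target]
    edge = edge_cluster_of.get(target)  # None when the domain has no NSX Edge cluster
    affected = {target}
    # Domains sharing the same NSX Manager cluster are upgraded together.
    for domain, domain_manager in nsx_manager_of.items():
        if domain_manager == manager:
            affected.add(domain)
    # Domains sharing the same NSX Edge cluster are upgraded together.
    if edge is not None:
        for domain, domain_edge in edge_cluster_of.items():
            if domain_edge == edge:
                affected.add(domain)
    return affected
```

The returned set is also the set of domains on which no operations can be performed while NSX-T is being upgraded.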

Prerequisites

- Verify that the NSX Manager password is valid.

- Download the upgrade bundle for NSX-T Data Center. See Downloading VMware Cloud Foundation Upgrade Bundles.

- Back up the NSX-T Data Center configuration and download the technical support logs.

- Ensure that the appropriate communication ports are open between the NSX Edge nodes and NSX Manager. For more information on ports, see https://ports.esp.vmware.com/home/NSX.

- Review Operational Impacts of NSX-T Data Center Upgrade in the NSX Upgrade Guide to understand the impact that each component upgrade might have on your environment.

- Using the vSphere Client, ensure that there are no active alarms on hosts or vSphere clusters.

- If you are upgrading to NSX-T 3.2.1.2, VMware strongly recommends that you run the NSX Upgrade Evaluation Tool before starting the upgrade. The tool checks the health and readiness of your NSX Managers before the upgrade.

Procedure

Results

When all NSX-T Data Center workload components are upgraded successfully, a message with a green background and check mark is displayed.

Upgrade NSX-T Data Center for VMware Cloud Foundation in a Federated Environment

When NSX Federation is configured between two VMware Cloud Foundation instances, SDDC Manager does not manage the lifecycle of the NSX Global Managers. To upgrade the NSX Global Managers, first upgrade each VMware Cloud Foundation instance through the standard SDDC Manager lifecycle, and then manually upgrade the NSX Global Managers for each instance.
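The two-phase order described above can be written out as a plan. This is a hypothetical sketch with illustrative instance names; it only orders the steps and performs no upgrade.

```python
from typing import List


def federation_upgrade_plan(instances: List[str]) -> List[str]:
    """Order the upgrade steps for VCF instances joined by NSX Federation."""
    # Phase 1: every instance goes through its standard SDDC Manager lifecycle.
    steps = [f"{name}: upgrade with SDDC Manager" for name in instances]
    # Phase 2: only then upgrade each instance's NSX Global Managers manually,
    # because SDDC Manager does not manage their lifecycle.
    steps += [f"{name}: manually upgrade NSX Global Managers" for name in instances]
    return steps
```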

Download NSX Global Manager Upgrade Bundle

SDDC Manager does not manage the lifecycle of the NSX Global Managers. You must download the NSX-T Data Center upgrade bundle manually to upgrade the NSX Global Managers.

Procedure

Upgrade the Upgrade Coordinator for NSX Federation

The upgrade coordinator runs in the NSX Manager. It is a self-contained web application that orchestrates the upgrade of hosts, the NSX Edge cluster, the NSX Controller cluster, and the management plane.

The upgrade coordinator guides you through the upgrade sequence. You can track the upgrade process and, if necessary, you can pause and resume the upgrade process from the UI.

Procedure

Upgrade NSX Global Managers for VMware Cloud Foundation

Manually upgrade the NSX Global Managers when NSX Federation is configured between two VMware Cloud Foundation instances.

Prerequisites

Procedure

Upgrade vCenter Server for VMware Cloud Foundation

Use the VMware vCenter Server upgrade bundle to upgrade the vCenter Server instances that SDDC Manager manages. Upgrade vCenter Server in the management domain before upgrading vCenter Server in VI workload domains.

Prerequisites

- Download the VMware vCenter Server upgrade bundle. See Downloading VMware Cloud Foundation Upgrade Bundles.

- Take a file-based backup of the vCenter Server appliance before starting the upgrade. See Manually Back Up vCenter Server.

Note: After taking a backup, do not make any changes to the vCenter Server inventory or settings until the upgrade completes successfully.

- If your workload domain contains clusters with Workload Management (vSphere with Tanzu) enabled, the supported target release depends on the version of Kubernetes (K8s) currently running in those clusters. Older versions of K8s may require a specific upgrade sequence. See KB 88962 for more information.

Procedure

What to do next

Upgrade vSAN Witness Host for VMware Cloud Foundation

If your VMware Cloud Foundation environment contains stretched clusters, update and remediate the vSAN witness host.

Prerequisites

Download the ESXi ISO that matches the version listed in the Bill of Materials (BOM) section of the VMware Cloud Foundation Release Notes.

Procedure

1. In a web browser, log in to vCenter Server at https://vcenter_server_fqdn/ui.

2. Upload the ESXi ISO image file to vSphere Lifecycle Manager.

   - Click .

   - Click the Imported ISOs tab.

   - Click Import ISO and then click Browse.

   - Navigate to the ESXi ISO file you downloaded and click Open.

   - After the file is imported, click Close.

3. Create a baseline for the ESXi image.

   - On the Imported ISOs tab, select the ISO file that you imported, and click New baseline.

   - Enter a name for the baseline and specify the Content Type as Upgrade.

   - Click Next.

   - Select the ISO file that you imported and click Next.

   - Review the details and click Finish.

4. Attach the baseline to the vSAN witness host.

   - Click .

   - In the Inventory panel, click .

   - Select the vSAN witness host and click the Updates tab.

   - Under Attached Baselines, click .

   - Select the baseline that you created in step 3 and click Attach.

   - Click Check Compliance.

     After the compliance check is completed, the Status column for the baseline is displayed as Non-Compliant.

5. Remediate the vSAN witness host and update the ESXi hosts that it contains.

   - Right-click the vSAN witness host and click .

   - Click OK.

   - Click the Updates tab.

   - Select the baseline that you created in step 3 and click Remediate.

   - In the End user license agreement dialog box, select the check box and click OK.

   - In the Remediate dialog box, select the vSAN witness host, and click Remediate.

     The remediation process might take several minutes. After the remediation is completed, the Status column for the baseline is displayed as Compliant.

   - Right-click the vSAN witness host and click .

   - Click OK.

Upgrade ESXi with vSphere Lifecycle Manager Baselines for VMware Cloud Foundation

The management domain uses vSphere Lifecycle Manager baselines for ESXi host upgrades. VI workload domains can use vSphere Lifecycle Manager baselines or vSphere Lifecycle Manager images. The following procedure describes upgrading ESXi hosts in workload domains that use vSphere Lifecycle Manager baselines.

For information about upgrading ESXi in VI workload domains that use vSphere Lifecycle Manager images, see Upgrade ESXi with vSphere Lifecycle Manager Images for VMware Cloud Foundation.

By default, the upgrade process upgrades the ESXi hosts in all clusters in a workload domain in parallel. If you have multiple clusters in the management domain or in a VI workload domain, you can select the clusters to upgrade.

If you want to skip any hosts while applying an ESXi update to the management domain or a VI workload domain, you must add these hosts to the application-prod.properties file before you begin the update. See "Skip Hosts During ESXi Update".
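The selection rules described above (all clusters by default, an optional subset of clusters, and skipped hosts left untouched) can be modeled to preview which hosts an ESXi upgrade will touch. This is a hypothetical sketch: cluster and host names are illustrative, it does not model parallel execution, and it does not show the entry format for the application-prod.properties file, which is covered in "Skip Hosts During ESXi Update".

```python
from typing import Dict, Iterable, List, Optional, Set


def hosts_to_upgrade(clusters: Dict[str, List[str]],
                     selected_clusters: Optional[Iterable[str]] = None,
                     skipped_hosts: Iterable[str] = ()) -> Dict[str, List[str]]:
    """Return, per cluster, the hosts an ESXi upgrade would touch.

    By default every cluster in the domain is included; pass `selected_clusters`
    to limit the run. Hosts listed in `skipped_hosts` are left out.
    """
    chosen = set(clusters) if selected_clusters is None else set(selected_clusters)
    unknown = chosen - set(clusters)
    if unknown:
        raise KeyError(f"unknown cluster(s): {sorted(unknown)}")
    skip: Set[str] = set(skipped_hosts)
    return {name: [host for host in hosts if host not in skip]
            for name, hosts in clusters.items() if name in chosen}
```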

To perform ESXi upgrades with custom ISO images or async drivers, see "Upgrade ESXi with Custom ISOs" and "Upgrade ESXi with VMware Cloud Foundation Stock ISO and Async Drivers".

If you are using external (non-vSAN) storage, the following procedure updates the ESXi hosts attached to the external storage. However, updating and patching the storage software and drivers is a manual task and falls outside of SDDC Manager lifecycle management. To ensure supportability after an ESXi upgrade, consult the vSphere HCL and your storage vendor.

Prerequisites

- Verify that the ESXi passwords are valid.

- Download the ESXi bundle. See Downloading VMware Cloud Foundation Upgrade Bundles.

- Ensure that the domain for which you want to perform a cluster-level upgrade does not have any hosts or clusters in an error state. Resolve the error state or remove the hosts and clusters with errors before proceeding.

Procedure

What to do next

Skip Hosts During ESXi Update

You can skip hosts while applying an ESXi update to the management domain or a VI workload domain. The skipped hosts are not updated.

Procedure

Results

The hosts added to the application-prod.properties file are not updated when you update the workload domain.

Upgrade ESXi with Custom ISOs

For clusters in workload domains with vSphere Lifecycle Manager baselines, you can upgrade ESXi with a custom ISO from your vendor. VMware Cloud Foundation 4.4.1.1 and later support multiple custom ISOs in a single ESXi upgrade in cases where specific clusters or workload domains require different custom ISOs.
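When specific clusters or workload domains need different custom ISOs, a small lookup table can help you plan which ISO goes where before you start the upgrade. This is a hypothetical planning aid: the precedence (a cluster-specific ISO overrides a domain-wide one) is an assumption made for illustration, not documented SDDC Manager behavior, and the names are illustrative.

```python
from typing import Dict, Optional, Tuple


def resolve_custom_iso(domain: str, cluster: str,
                       cluster_isos: Dict[Tuple[str, str], str],
                       domain_isos: Dict[str, str]) -> Optional[str]:
    """Pick the custom ISO for one cluster.

    Assumed precedence (illustrative only): a cluster-specific ISO wins over a
    domain-wide ISO; None means no custom ISO is assigned.
    """
    if (domain, cluster) in cluster_isos:
        return cluster_isos[(domain, cluster)]
    return domain_isos.get(domain)
```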

Prerequisites

Download the appropriate vendor-specific ISOs on a computer with internet access. If no vendor-specific ISO is available for the required version of ESXi, then you can create one. See Create a Custom ISO Image for ESXi.

Procedure

Upgrade ESXi with VMware Cloud Foundation Stock ISO and Async Drivers

For clusters in workload domains with vSphere Lifecycle Manager baselines, you can apply the stock ESXi upgrade bundle with specified async drivers.

Prerequisites

Download the appropriate async drivers for your hardware on a computer with internet access.

Procedure

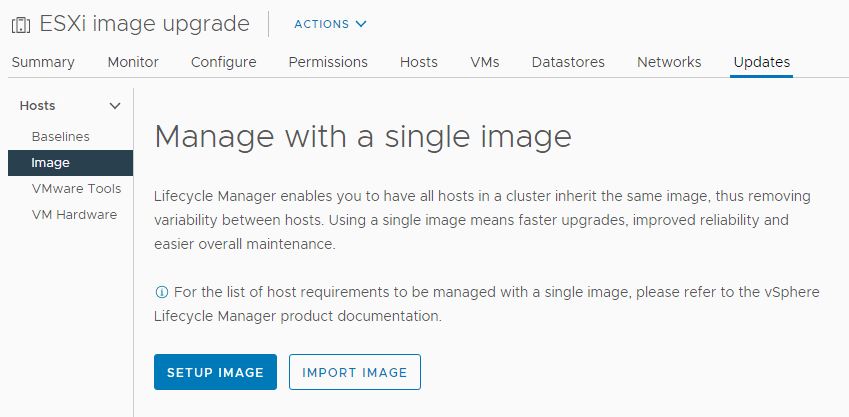

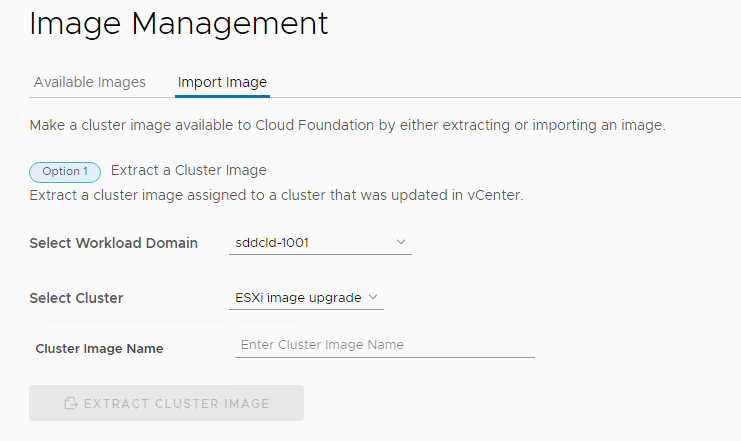

Upgrade ESXi with vSphere Lifecycle Manager Images for VMware Cloud Foundation

VI workload domains can use vSphere Lifecycle Manager baselines or vSphere Lifecycle Manager images for ESXi host upgrade. The following procedure describes upgrading ESXi hosts in workload domains that use vSphere Lifecycle Manager images.

For information about upgrading ESXi in workload domains that use vSphere Lifecycle Manager baselines, see Upgrade ESXi with vSphere Lifecycle Manager Baselines for VMware Cloud Foundation.

You create a vSphere Lifecycle Manager image for upgrading ESXi hosts using the vSphere Client. During the creation of the image, you define the ESXi version and can optionally add vendor add-ons, components, and firmware. After you extract the vSphere Lifecycle Manager image into SDDC Manager, the ESXi update becomes available for the relevant VI workload domains.

Prerequisites

- Verify that the ESXi passwords are valid.

- Ensure that the domain for which you want to perform a cluster-level upgrade does not have any hosts or clusters in an error state. Resolve the error state or remove the hosts and clusters with errors before proceeding.

- You must upgrade NSX and vCenter Server before you can upgrade ESXi hosts with a vSphere Lifecycle Manager image.

- If you want to add firmware to the vSphere Lifecycle Manager image, you must install the Hardware Support Manager from your vendor. See Firmware Updates.
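The image contents described above (a required ESXi version plus optional vendor add-on, components, and firmware) and the prerequisites listed here can be combined into a readiness check. This is a hypothetical sketch: `ImageSpec` and `image_upgrade_blockers` are illustrative names, the boolean inputs are facts you supply, and nothing here calls vSphere Lifecycle Manager.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class ImageSpec:
    """Hypothetical summary of a vSphere Lifecycle Manager image's contents."""
    esxi_version: str                                     # required base ESXi version
    vendor_addon: Optional[str] = None                    # optional
    components: List[str] = field(default_factory=list)  # optional
    firmware_addon: Optional[str] = None                  # optional; needs a hardware support manager


def image_upgrade_blockers(spec: ImageSpec, nsx_upgraded: bool,
                           vcenter_upgraded: bool, hsm_installed: bool) -> List[str]:
    """Return the prerequisites above that are not yet met."""
    blockers: List[str] = []
    if not spec.esxi_version:
        blockers.append("image has no ESXi version")
    if not (nsx_upgraded and vcenter_upgraded):
        blockers.append("upgrade NSX and vCenter Server before ESXi")
    if spec.firmware_addon and not hsm_installed:
        blockers.append("firmware requires the vendor's Hardware Support Manager")
    return blockers
```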

Procedure

What to do next

Firmware Updates

You can use vSphere Lifecycle Manager images to perform firmware updates on the ESXi hosts in a cluster. Using a vSphere Lifecycle Manager image simplifies the host update operation. With a single operation, you update both the software and the firmware on the host.

To apply firmware updates to hosts in a cluster, you must deploy and configure a vendor-provided software module called a hardware support manager. The deployment method and management of a hardware support manager are determined by the respective OEM. For example, the hardware support manager that Dell EMC provides is part of their host management solution, OpenManage Integration for VMware vCenter (OMIVV), which you deploy as an appliance. See Deploying Hardware Support Managers.

You must deploy the hardware support manager appliance on a host with sufficient disk space. After you deploy the appliance, you must power on the appliance virtual machine, log in to the appliance as an administrator, and register the appliance as a vCenter Server extension. Each hardware support manager has its own mechanism of managing firmware packages and making firmware add-ons available for you to choose.

For detailed information about deploying, configuring, and managing hardware support managers, refer to the vendor-provided documentation.

Post Upgrade Steps for NFS-Based VI Workload Domains

After upgrading VI workload domains that use NFS storage, you must add a static route for hosts to access NFS storage over the NFS gateway. This process must be completed before expanding the workload domain.
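One general way to add a static route on an ESXi host is the `esxcli network ip route ipv4 add` command with `--network` and `--gateway`. Whether this matches the exact procedure VMware documents for this post-upgrade step is an assumption. The sketch below only validates the inputs and builds the command arguments; it does not run anything, and the network and gateway values are placeholders.

```python
import ipaddress
from typing import List


def nfs_route_command(nfs_network: str, nfs_gateway: str) -> List[str]:
    """Build esxcli arguments that add an IPv4 static route to the NFS network.

    Raises ValueError if the network (CIDR, no host bits set) or the gateway
    address is not valid IPv4.
    """
    network = ipaddress.IPv4Network(nfs_network)  # placeholder, e.g. "10.0.50.0/24"
    gateway = ipaddress.IPv4Address(nfs_gateway)  # placeholder, e.g. "172.16.10.1"
    return ["esxcli", "network", "ip", "route", "ipv4", "add",
            "--network", str(network), "--gateway", str(gateway)]
```

Returning an argument list rather than a shell string avoids quoting problems if you pass the result to a process runner on each host.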