This topic describes the hardware requirements for production deployments of VMware Tanzu Kubernetes Grid Integrated Edition (TKGI) on vSphere with NSX.

vSphere Cluster Requirements

A vSphere cluster is a collection of ESXi hosts and associated virtual machines (VMs) with shared resources and a shared management interface. Installing Tanzu Kubernetes Grid Integrated Edition on vSphere with NSX requires the following vSphere clusters:

- Tanzu Kubernetes Grid Integrated Edition Management Cluster

- Tanzu Kubernetes Grid Integrated Edition Edge Cluster

- Tanzu Kubernetes Grid Integrated Edition Compute Cluster

For more information on creating vSphere clusters, see Creating Clusters in the vSphere documentation.

Management Cluster

The Tanzu Kubernetes Grid Integrated Edition Management Cluster on vSphere comprises the following components:

- vCenter Server

- NSX-T Manager v3.0 or later (quantity 3)

For more information, see Installing and Configuring NSX-T Data Center v3.0 for TKGI.

Edge Cluster

A TKGI Edge Cluster on vSphere with NSX comprises two or more NSX Edge Nodes. The maximum supported number of Edge Nodes per TKGI Edge Cluster is 10.

For more information, see Installing and Configuring NSX-T Data Center v3.0 for TKGI.

Compute Cluster

The Tanzu Kubernetes Grid Integrated Edition Compute Cluster on vSphere comprises the following components:

- Kubernetes control plane nodes (quantity 3)

- Kubernetes worker nodes

For more information, see Installing and Configuring NSX-T Data Center v3.0 for TKGI.

Management Plane Placement

The Tanzu Kubernetes Grid Integrated Edition Management Plane comprises the following components:

- Ops Manager

- BOSH Director

- TKGI Control Plane

- VMware Harbor Registry

Depending on your design choice, TKGI management components can be deployed in the Tanzu Kubernetes Grid Integrated Edition Management Cluster on the standard vSphere network or in the Tanzu Kubernetes Grid Integrated Edition Compute Cluster on the NSX-defined virtual network. For more information, see NSX Deployment Topologies for Tanzu Kubernetes Grid Integrated Edition.

vSphere Cluster Configuration Requirements

For each vSphere cluster defined for Tanzu Kubernetes Grid Integrated Edition, the following configurations are required to support production workloads:

- All vSphere clusters are managed by the same vCenter server. TKGI does not support workload clusters in multiple vCenter server inventories.

- The vSphere Distributed Resource Scheduler (DRS) is enabled. For more information, see Creating a DRS Cluster in the vSphere documentation.

- The DRS custom automation level is set to Partially Automated or Fully Automated. For more information, see Set a Custom Automation Level for a Virtual Machine in the vSphere documentation.

- vSphere high-availability (HA) is enabled. For more information, see Creating and Using vSphere HA Clusters in the vSphere documentation.

- vSphere HA Admission Control (AC) is configured to support one ESXi host failure. For more information, see Configure Admission Control in the vSphere documentation. Specifically:

  - Host failure: Restart VMs

  - Admission Control: Host failures cluster tolerates = 1
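The requirements above can be expressed as a simple checklist. The following Python sketch is illustrative only: `ClusterSettings` and `find_violations` are hypothetical names, not part of any vSphere or TKGI API, and the settings are assumed to have been collected by hand or by your own tooling.

```python
from dataclasses import dataclass


@dataclass
class ClusterSettings:
    """Hypothetical snapshot of one vSphere cluster (not a vSphere API type)."""
    name: str
    vcenter: str
    drs_enabled: bool
    drs_automation_level: str
    ha_enabled: bool
    ha_host_failures_tolerated: int


ALLOWED_DRS_LEVELS = {"Partially Automated", "Fully Automated"}


def find_violations(clusters):
    """Return a list of messages, one per requirement that is not met."""
    problems = []
    # All clusters must be managed by the same vCenter Server.
    if len({c.vcenter for c in clusters}) > 1:
        problems.append("clusters are managed by more than one vCenter Server")
    for c in clusters:
        if not c.drs_enabled:
            problems.append(f"{c.name}: DRS is not enabled")
        if c.drs_automation_level not in ALLOWED_DRS_LEVELS:
            problems.append(f"{c.name}: DRS automation level is {c.drs_automation_level!r}")
        if not c.ha_enabled:
            problems.append(f"{c.name}: vSphere HA is not enabled")
        # The topic specifies "Host failures cluster tolerates = 1".
        if c.ha_host_failures_tolerated != 1:
            problems.append(f"{c.name}: HA admission control does not tolerate exactly 1 host failure")
    return problems


if __name__ == "__main__":
    example = [
        ClusterSettings("management-edge", "vcenter-a", True, "Fully Automated", True, 1),
        ClusterSettings("compute-az1", "vcenter-a", True, "Manual", True, 1),
    ]
    for message in find_violations(example):
        print(message)
```

An empty result means that every listed requirement is met for the clusters you passed in.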

RPD Topology with NSX

The recommended production deployment (RPD) topology represents the VMware-recommended configuration to run production workloads in Tanzu Kubernetes Grid Integrated Edition on vSphere with NSX.

Note: The RPD differs depending on whether you are using vSAN or not.

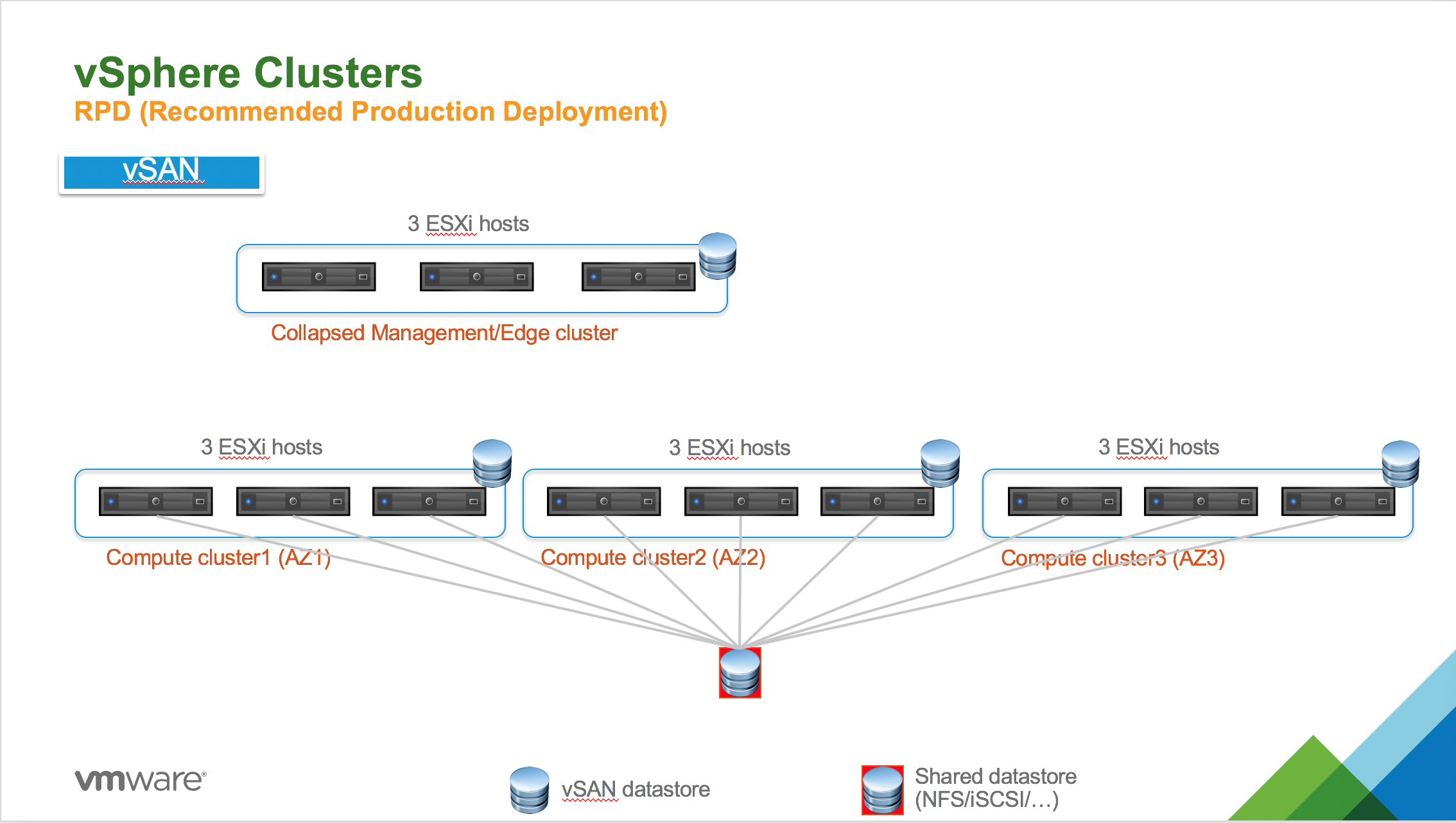

RPD with vSAN

The RPD for Tanzu Kubernetes Grid Integrated Edition with vSAN storage requires 12 ESXi hosts. The diagram below shows the topology for this deployment.

The following subsections describe configuration details for the RPD with vSAN topology.

Management/Edge Cluster

The RPD with vSAN topology includes a Management/Edge Cluster with the following characteristics:

- Collapsed Management/Edge Cluster with three ESXi hosts.

- Each ESXi host runs one NSX Manager. The NSX Control Plane has three NSX Managers total.

- Two NSX Edge Nodes are deployed across two different ESXi hosts.

Compute Clusters

The RPD with vSAN topology includes three Compute Clusters with the following characteristics:

- Each Compute cluster has three ESXi hosts and is bound to a distinct availability zone (AZ) defined in BOSH Director.

- Compute cluster1 (AZ1) with three ESXi hosts.

- Compute cluster2 (AZ2) with three ESXi hosts.

- Compute cluster3 (AZ3) with three ESXi hosts.

- Each Compute cluster runs one instance of a Tanzu Kubernetes Grid Integrated Edition-provisioned Kubernetes cluster with three control plane nodes per cluster and a per-plan number of worker nodes.
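The ESXi host count for an RPD topology follows directly from the cluster layout: one collapsed Management/Edge Cluster plus three Compute Clusters. The following Python sketch shows the arithmetic. The function name is hypothetical and chosen only for illustration.

```python
def rpd_esxi_host_count(hosts_per_compute_cluster,
                        compute_clusters=3,
                        management_edge_hosts=3):
    """Total ESXi hosts: Management/Edge hosts plus all Compute Cluster hosts."""
    return management_edge_hosts + compute_clusters * hosts_per_compute_cluster


# Three hosts per Compute Cluster, as in the RPD with vSAN.
print(rpd_esxi_host_count(3))  # 12

# Two hosts per Compute Cluster, as in the RPD without vSAN.
print(rpd_esxi_host_count(2))  # 9
```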

Storage (vSAN)

The RPD with vSAN topology requires the following storage configuration:

- Each Compute Cluster is backed by a vSAN datastore.

- An external shared datastore (using NFS or iSCSI, for instance) must be provided to store Persistent Volumes (PVs) for Kubernetes pods.

- Three ESXi hosts are required per Compute cluster because of the vSAN cluster requirements. For data protection, vSAN creates two copies of the data and requires one witness.

For more information on using vSAN with Tanzu Kubernetes Grid Integrated Edition, see PersistentVolume Storage Options on vSphere.
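The three-host minimum follows from counting the vSAN components that must sit on separate hosts: two data copies and one witness. The sketch below generalizes this to other failure tolerances using the mirroring rule of n + 1 copies and n witnesses. That generalization is an assumption that this topic does not state. This topic covers only the case of tolerating one failure.

```python
def min_vsan_hosts(failures_to_tolerate=1):
    """Minimum hosts for mirrored vSAN data (assumed rule: n + 1 copies, n witnesses)."""
    if failures_to_tolerate < 0:
        raise ValueError("failures_to_tolerate must be non-negative")
    data_copies = failures_to_tolerate + 1
    witnesses = failures_to_tolerate
    # Each copy and each witness is placed on a different ESXi host.
    return data_copies + witnesses


print(min_vsan_hosts(1))  # 3, matching the three hosts per Compute cluster
```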

Future Growth

The RPD with vSAN topology can be scaled as follows to accommodate future growth requirements:

- The collapsed Management/Edge Cluster can be expanded to include up to 64 ESXi hosts.

- Each Compute Cluster can be expanded to include up to 64 ESXi hosts.

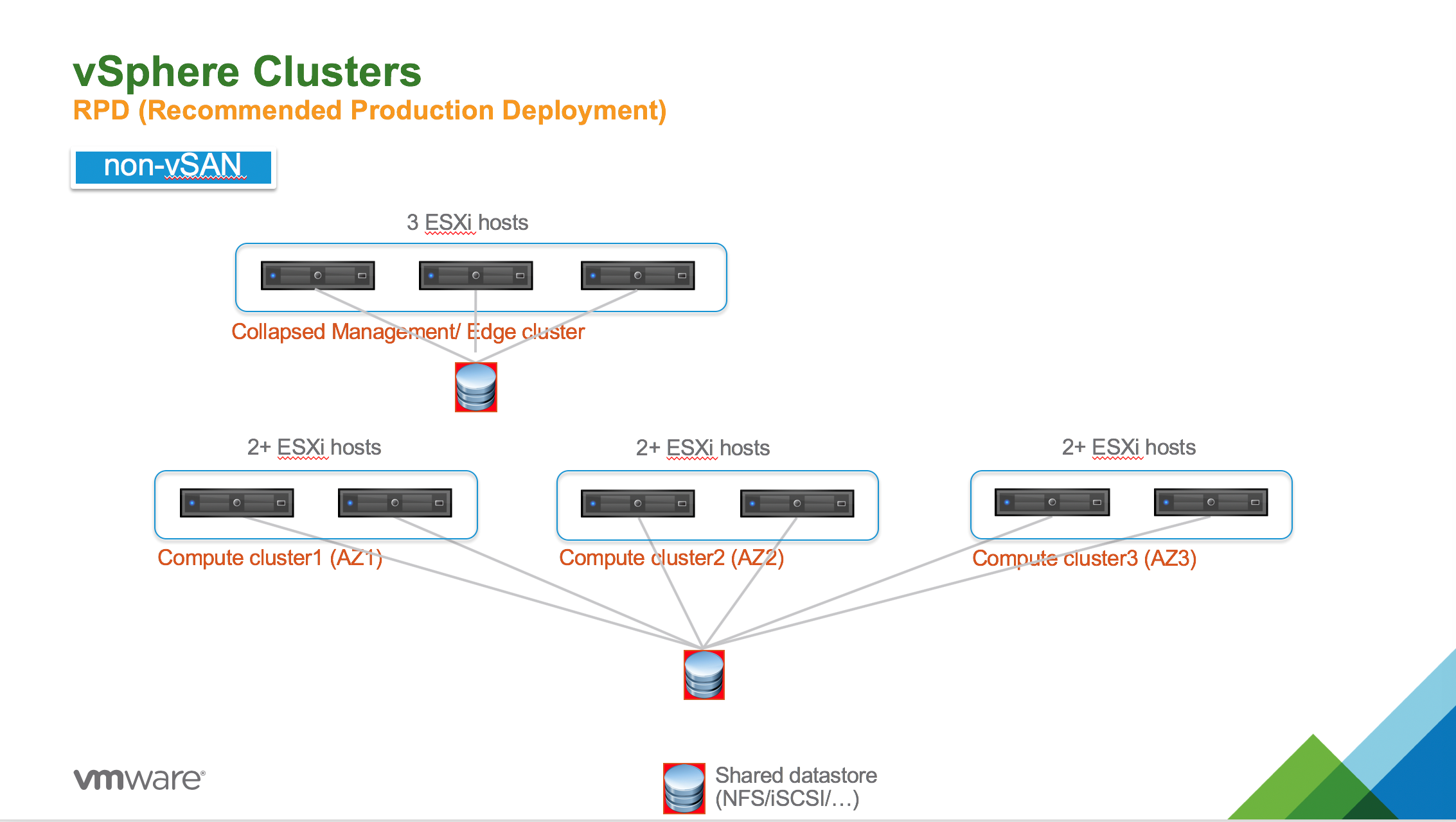

RPD without vSAN

The RPD for Tanzu Kubernetes Grid Integrated Edition without vSAN storage requires nine ESXi hosts. The diagram below shows the topology for this deployment.

The following subsections describe configuration details for the RPD of Tanzu Kubernetes Grid Integrated Edition without vSAN.

Management/Edge Cluster

The RPD without vSAN includes a Management/Edge Cluster with the following characteristics:

- Collapsed Management/Edge Cluster with three ESXi hosts.

- Each ESXi host runs one NSX Manager. The NSX Control Plane has three NSX Managers total.

- Two NSX Edge Nodes are deployed across two different ESXi hosts.

Compute Clusters

The RPD without vSAN topology includes three Compute Clusters with the following characteristics:

- Each Compute cluster has two ESXi hosts and is bound to a distinct availability zone (AZ) defined in BOSH Director.

- Compute cluster1 (AZ1) with two ESXi hosts.

- Compute cluster2 (AZ2) with two ESXi hosts.

- Compute cluster3 (AZ3) with two ESXi hosts.

- Each Compute cluster runs one instance of a Tanzu Kubernetes Grid Integrated Edition-provisioned Kubernetes cluster with three control plane nodes per cluster and a per-plan number of worker nodes.

Storage (non-vSAN)

The RPD without vSAN topology requires the following storage configuration:

- All Compute Clusters are connected to the same shared datastore, which is used for persistent VM disks for Tanzu Kubernetes Grid Integrated Edition components and Persistent Volumes (PVs) for Kubernetes pods.

- All datastores can be collapsed into a single datastore, if needed.

Future Growth

The RPD without vSAN topology can be scaled as follows to accommodate future growth requirements:

- The collapsed Management/Edge Cluster can be expanded to include up to 64 ESXi hosts.

- Each Compute Cluster can be expanded to include up to 64 ESXi hosts.

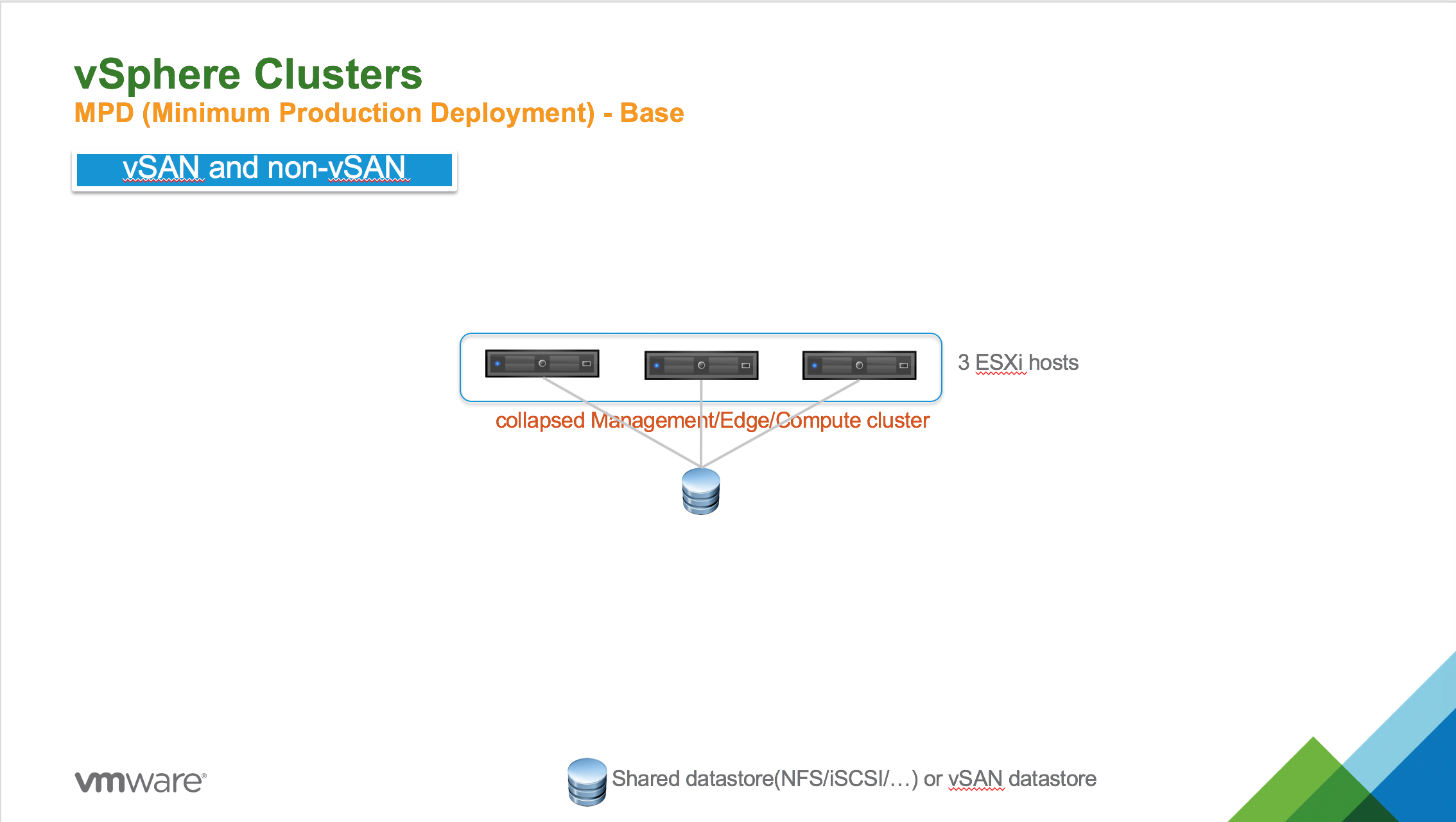

MPD Topology with NSX

The minimum production deployment (MPD) topology represents the baseline requirements for running Tanzu Kubernetes Grid Integrated Edition on vSphere with NSX.

Note: The MPD topology for Tanzu Kubernetes Grid Integrated Edition applies to both vSAN and non-vSAN environments.

The diagram below shows the topology for this deployment.

The following subsections describe configuration details for an MPD of Tanzu Kubernetes Grid Integrated Edition.

MPD Topology Requirements

The MPD topology for Tanzu Kubernetes Grid Integrated Edition requires the following minimum configuration:

- A single collapsed Management/Edge/Compute cluster running three ESXi hosts in total.

- Each ESXi host runs one NSX Manager. The NSX Control Plane has three NSX Managers in total.

- Each ESXi host runs one Kubernetes control plane node. Each Kubernetes cluster has three control plane nodes in total.

- Two NSX edge nodes are deployed across two different ESXi hosts.

- The shared datastore (NFS or iSCSI, for instance) or vSAN datastore is used for persistent VM disks for Tanzu Kubernetes Grid Integrated Edition components and Persistent Volumes (PVs) for Kubernetes pods.

- The collapsed Management/Edge/Compute cluster can be expanded to include up to 64 ESXi hosts.

Note: For an MPD deployment, each ESXi host must have four physical network interface controllers (pNICs). In addition, while a Tanzu Kubernetes Grid Integrated Edition deployment requires a minimum of three ESXi hosts, Tanzu Kubernetes Grid Integrated Edition upgrades require four ESXi hosts to ensure full survivability of the NSX Manager appliance.

MPD Topology Configuration

When configuring vSphere for an MPD topology for Tanzu Kubernetes Grid Integrated Edition, keep in mind the following requirements:

- When deploying the NSX Manager to each ESXi host, create a vSphere distributed resource scheduler (DRS) anti-affinity rule of type “separate virtual machines” for each of the three NSX Managers.

- When deploying the NSX Edge Nodes across two different ESXi hosts, create a DRS anti-affinity rule of type “separate virtual machines” for both Edge Node VMs.

- After deploying the Kubernetes cluster, you must manually make sure each control plane node is deployed to a different ESXi host by tuning the DRS anti-affinity rule of type “separate virtual machines.”

For more information on defining DRS anti-affinity rules, see Virtual Machine Storage DRS Rules in the vSphere documentation.
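To confirm that a "separate virtual machines" rule has the intended effect, compare the current VM-to-host placement against each group of VMs that must be kept apart. The following Python sketch assumes that you already have the placement as a plain mapping. The VM names, host names, and function name are hypothetical, and the sketch makes no vSphere API calls.

```python
from collections import defaultdict


def hosts_with_colocated_vms(placement, group):
    """Return {host: [vms]} for hosts that run more than one VM from the group.

    placement: dict mapping VM name to the ESXi host it currently runs on.
    group: VM names that an anti-affinity rule is meant to keep on separate hosts.
    """
    vms_by_host = defaultdict(list)
    for vm in group:
        vms_by_host[placement[vm]].append(vm)
    return {host: vms for host, vms in vms_by_host.items() if len(vms) > 1}


# Hypothetical placement for the three NSX Managers in an MPD topology.
placement = {
    "nsx-manager-1": "esxi-01",
    "nsx-manager-2": "esxi-02",
    "nsx-manager-3": "esxi-02",
}
print(hosts_with_colocated_vms(placement, list(placement)))
# {'esxi-02': ['nsx-manager-2', 'nsx-manager-3']}
```

An empty dictionary means that every VM in the group runs on a different ESXi host. You can apply the same check to the two Edge Node VMs and to the three control plane nodes of each Kubernetes cluster.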

MPD Considerations

When planning an MPD topology for Tanzu Kubernetes Grid Integrated Edition, keep in mind the following:

- Leverage vSphere resource pools to allocate proper hardware resources for the Tanzu Kubernetes Grid Integrated Edition Management Plane components and tune reservation and resource limits accordingly.

- There is no fault tolerance for the Kubernetes cluster because Tanzu Kubernetes Grid Integrated Edition Availability Zones are not fully leveraged with this topology.

- At a minimum, confirm that the Tanzu Kubernetes Grid Integrated Edition AZ is mapped to a vSphere Resource Pool.

For more information, see Create Management Plane and Create IP Blocks and Pool for Compute Plane in Installing and Configuring NSX-T Data Center v3.0 for TKGI.

VM Inventory and Sizes

The following tables list the VMs and their sizes for deployments of Tanzu Kubernetes Grid Integrated Edition on vSphere with NSX.

Management Plane VMs and Sizes

The following table lists the resource requirements for NSX infrastructure and Tanzu Kubernetes Grid Integrated Edition Management Plane VMs.

| VM | CPU Cores | Memory (GB) | Ephemeral Disk (GB) |

|---|---|---|---|

| BOSH Director | 2 | 8 | 103 |

| Harbor Registry | 2 | 8 | 167 |

| Ops Manager | 1 | 8 | 160 |

| TKGI API | 2 | 8 | 64 |

| TKGI Database | 2 | 8 | 64 |

| NSX Manager 1 | 6 | 24 | 200 |

| NSX Manager 2 | 6 | 24 | 200 |

| NSX Manager 3 | 6 | 24 | 200 |

| vCenter Appliance | 4 | 16 | 290 |

| TOTAL | 31 | 128 | 1.38 TB |

Note: The NSX Manager resource requirements are based on the medium size VM, which is the minimum recommended form factor for NSX-T v3.0 and later. For more information, see NSX Manager VM and Host Transport Node System Requirements.
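If you change any VM size, you can recompute the TOTAL row by summing the per-VM values. The following Python sketch does this with the values from the table above. The variable names are illustrative. With these values, the per-VM disk sizes sum to 1,448 GB, so treat the TB figure in the TOTAL row as approximate.

```python
# (CPU cores, memory in GB, ephemeral disk in GB), copied from the table above.
MANAGEMENT_PLANE_VMS = {
    "BOSH Director": (2, 8, 103),
    "Harbor Registry": (2, 8, 167),
    "Ops Manager": (1, 8, 160),
    "TKGI API": (2, 8, 64),
    "TKGI Database": (2, 8, 64),
    "NSX Manager 1": (6, 24, 200),
    "NSX Manager 2": (6, 24, 200),
    "NSX Manager 3": (6, 24, 200),
    "vCenter Appliance": (4, 16, 290),
}

# Sum each column across all VMs.
total_cpu = sum(cpu for cpu, _, _ in MANAGEMENT_PLANE_VMS.values())
total_memory_gb = sum(mem for _, mem, _ in MANAGEMENT_PLANE_VMS.values())
total_disk_gb = sum(disk for _, _, disk in MANAGEMENT_PLANE_VMS.values())

print(total_cpu, total_memory_gb, total_disk_gb)  # 31 128 1448
```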

Storage Requirements for Large Numbers of Pods

If you expect the cluster workload to run a large number of pods continuously, then increase the size of persistent disk storage allocated to the TKGI Database VM as follows:

| Number of Pods | Persistent Disk Requirements (GB) |

|---|---|

| 1,000 pods | 20 |

| 5,000 pods | 100 |

| 10,000 pods | 200 |

| 50,000 pods | 1,000 |
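Every row in the table above works out to 20 GB of persistent disk per 1,000 pods. The following Python sketch applies that ratio to other pod counts. Rounding up to the next 1,000 pods for values between the table rows is an assumption, and the function name is hypothetical.

```python
GB_PER_1000_PODS = 20  # ratio implied by every row of the table above


def tkgi_database_disk_gb(expected_pods):
    """Estimate persistent disk (GB) for the TKGI Database VM."""
    if expected_pods < 0:
        raise ValueError("expected_pods must be non-negative")
    # Round up to the next full 1,000 pods (assumption for in-between values).
    thousands = -(-expected_pods // 1000)
    return thousands * GB_PER_1000_PODS


print(tkgi_database_disk_gb(5_000))   # 100
print(tkgi_database_disk_gb(50_000))  # 1000
```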

NSX Edge Node VMs and Sizes

The following table lists the resource requirements for each VM in the Edge Cluster.

| VM | CPU Cores | Memory (GB) | Ephemeral Disk (GB) |

|---|---|---|---|

| NSX Edge Node 1 | 8 | 32 | 120 |

| NSX Edge Node 2 | 8 | 32 | 120 |

| TOTAL | 16 | 64 | 240 |

Note: NSX-T 3.0 Edge Nodes can be deployed on Intel and AMD-based hosts.

Kubernetes Cluster Nodes VMs and Sizes

The following table lists sizing information for Kubernetes cluster node VMs. The size and resource consumption of these VMs are configurable in the Plans section of the Tanzu Kubernetes Grid Integrated Edition tile.

| VM | CPU Cores | Memory (GB) | Ephemeral Disk (GB) | Persistent Disk |

|---|---|---|---|---|

| Control Plane Nodes | 1 to 16 | 1 to 64 | 8 to 256 | 1 GB to 32 TB |

| Worker Nodes | 1 to 16 | 1 to 64 | 8 to 256 | 1 GB to 32 TB |

For illustrative purposes, consider the following example requirements for a control plane node and a worker node:

| VM | CPU Cores | Memory (GB) | Ephemeral Disk (GB) | Persistent Disk (GB) |

|---|---|---|---|---|

| Control Plane Node | 2 | 8 | 64 | 128 |

| Worker Node | 4 | 16 | 64 | 256 |

The following are the requirements for an example environment consisting of two Kubernetes clusters, each cluster consisting of three of the control plane nodes and five of the worker nodes described above:

| VM | CPU Cores | Memory (GB) | Ephemeral Disk | Persistent Disk |

|---|---|---|---|---|

| Control Plane Nodes (3 nodes) | 6 | 24 | 192 GB | 384 GB |

| Worker Nodes (5 nodes) | 20 | 80 | 320 GB | 1280 GB |

| TOTAL (2 clusters) | 52 | 208 | 1.0 TB | 3.4 TB |
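To size a different environment, multiply the per-node values by the node counts and the number of clusters. The following Python sketch reproduces the example above. `NodeSize` and `environment_totals` are hypothetical names. The sketch reports disk totals in GB, which the table shows as rounded TB figures.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NodeSize:
    cpu: int
    memory_gb: int
    ephemeral_gb: int
    persistent_gb: int


# Example node sizes from the table above.
CONTROL_PLANE = NodeSize(cpu=2, memory_gb=8, ephemeral_gb=64, persistent_gb=128)
WORKER = NodeSize(cpu=4, memory_gb=16, ephemeral_gb=64, persistent_gb=256)


def environment_totals(control_plane_nodes, worker_nodes, clusters):
    """Return total CPU cores, memory, and disk (GB) across all clusters."""
    def scaled(field):
        per_cluster = (control_plane_nodes * getattr(CONTROL_PLANE, field)
                       + worker_nodes * getattr(WORKER, field))
        return clusters * per_cluster

    return NodeSize(
        cpu=scaled("cpu"),
        memory_gb=scaled("memory_gb"),
        ephemeral_gb=scaled("ephemeral_gb"),
        persistent_gb=scaled("persistent_gb"),
    )


print(environment_totals(control_plane_nodes=3, worker_nodes=5, clusters=2))
# NodeSize(cpu=52, memory_gb=208, ephemeral_gb=1024, persistent_gb=3328)
```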

Hardware Requirements

The following tables list the hardware requirements for RPD and MPD topologies for Tanzu Kubernetes Grid Integrated Edition on vSphere with NSX.

RPD Hardware Requirements

The following table lists the hardware requirements for the RPD with vSAN topology.

| Cluster | ESXi Host Count | CPU Cores (with HT) | Memory (GB) | NICs | Shared Persistent Disk (TB) |

|---|---|---|---|---|---|

| Management/Edge Cluster | 3 | 16 | 98 | 2x 10GbE | 1.5 |

| Compute cluster1 (AZ1) | 3 | 6 | 48 | 2x 10GbE | 192 |

| Compute cluster2 (AZ2) | 3 | 6 | 48 | 2x 10GbE | 192 |

| Compute cluster3 (AZ3) | 3 | 6 | 48 | 2x 10GbE | 192 |

Note: The CPU Cores values assume the use of hyper-threading (HT).

The following table lists the hardware requirements for the RPD without vSAN topology.

| Cluster | ESXi Host Count | CPU Cores (with HT) | Memory (GB) | NICs | Shared Persistent Disk (TB) |

|---|---|---|---|---|---|

| Management/Edge Cluster | 3 | 16 | 98 | 2x 10GbE | 1.5 |

| Compute cluster1 (AZ1) | 2 | 10 | 70 | 2x 10GbE | 192 |

| Compute cluster2 (AZ2) | 2 | 10 | 70 | 2x 10GbE | 192 |

| Compute cluster3 (AZ3) | 2 | 10 | 70 | 2x 10GbE | 192 |

Note: The CPU Cores values assume the use of hyper-threading (HT).

MPD Hardware Requirements

The following table lists the hardware requirements for the MPD topology with a single (collapsed) cluster for all Management, Edge, and Compute nodes.

| Cluster | ESXi Host Count | CPU Cores (with HT) | Memory (GB) | NICs | Shared Persistent Disk (TB) |

|---|---|---|---|---|---|

| Collapsed Cluster | 3 | 32 | 236 | 4x 10GbE or 2x pNICs* | 5.9 |

*If necessary, you can use two pNICs. For more information, see Deploy a Fully Collapsed vSphere Cluster NSX on Hosts Running N-VDS Switches in the VMware NSX documentation.

Adding Hardware Capacity

To add hardware capacity to your Tanzu Kubernetes Grid Integrated Edition environment on vSphere, do the following:

- Add one or more ESXi hosts to the vSphere compute cluster. For more information, see the VMware vSphere documentation.

- Prepare each newly added ESXi host so that it becomes an ESXi transport node for NSX. For more information, see Configure vSphere Networking for ESXi Hosts in Installing and Configuring NSX-T Data Center v3.0 for TKGI.